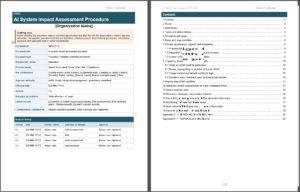

AI System Life Cycle Explained – ISO/IEC 42001:2023 A.6/B.6

This comprehensive guide covers the objectives (6.1.1 & 6.2.1), best practices for each control (6.1.2 through 6.2.8), and tips on designing, testing, documenting, and managing AI systems ethically and effectively throughout their life cycle.

A.6 / B.6 – Explaining ISO 42001 (Annex A & B) AI System Life Cycle

The AI system life cycle controls address nine specific areas (Controls 6.1.2 through 6.2.8).

First, they guide organizations to set clear objectives and processes for responsible AI design and development. Then, they define requirements for each major life cycle stage: from specifying AI system requirements and documenting design decisions, to verifying/validating the system, planning deployment, monitoring ongoing performance, maintaining technical documentation, and logging important events.

Implementing these controls end-to-end helps ensure AI systems are not only effective, but also safe, fair, transparent, and compliant throughout their lifespan.

Objective – A.6.1.1 / B.6.1.1

To ensure that the organization identifies and documents objectives and implements processes for the responsible design and development of AI systems.

In simpler terms, your organization should establish what “responsible AI” means in concrete goals (e.g. fairness, transparency, safety) and build those goals into your development processes (Objective 6.1.1).

Control 6.1.2 – A.6.1.2 / B.6.1.2

Objectives for Responsible AI System Development

Control 6.1.2 is about defining what “responsible AI” means for your organization and each AI project, and ensuring those goals shape the development process from start to finish.

Identify and document objectives to guide the responsible development of AI systems, and integrate measures to achieve these objectives in the AI development life cycle.

Explanation & Implementation Guidance

How to implement “responsible AI”:

Define Clear AI Objectives

Identify the ethical, safety, and performance objectives that each AI system should meet. These should align with your organization’s values and AI policy (e.g. fairness, transparency, privacy). For example, if fairness is a stated principle in your AI policy (see Control 2.2: AI Policy), then one development objective might be “ensure the AI model’s decisions are free from unfair bias across demographic groups.” List out such objectives (fairness, explainability, security, etc.) for every AI project.

(Refer to Annex C of ISO 42001 for common AI governance objectives and risk areas, such as accuracy, accountability, and non-discrimination, that can help you set your AI development goals.)

Incorporate Objectives into Requirements

Once objectives are defined, bake them into the AI system’s requirements and design criteria. For instance, if an objective is transparency, include a requirement that “the AI system must provide an explanation for its outputs that is understandable to users.” If safety is an objective, specify performance thresholds or fail-safes the system must meet (like “the self-driving AI must detect obstacles with at least 99% accuracy to avoid collisions”). By translating high-level principles into concrete requirements, you ensure they influence the system architecture and functionality from the outset.

Integrate into Development Processes

Ensure these responsible AI objectives are considered at each stage of the development life cycle.

For example, during data acquisition and preparation, use only approved, high-quality data sources that support your objectives (e.g. using diverse datasets to fulfill a fairness objective – see Control 7.3: Acquisition of Data for guidance on approved data sources). During model training, integrate measures like bias detection or fairness metrics to achieve the fairness objective. During testing, include test cases that validate each objective (such as checking that explanations generated by the system meet transparency criteria).

Essentially, embed checks and balances for your objectives into design reviews, data selection, model evaluation, and validation steps.

The organization may mandate specific tools or methods here – for instance, require use of a bias evaluation toolkit if fairness is an objective, or a robust explainability technique if transparency is an objective.
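As one concrete example of a fairness metric that a bias evaluation step might compute, the sketch below calculates the disparate impact ratio: the lowest group selection rate divided by the highest. The 0.8 threshold follows the commonly cited "four-fifths rule" and is an assumption here, not something ISO 42001 prescribes; the group labels and decisions are hypothetical.

```python
from collections import defaultdict


def selection_rates(groups, outcomes):
    """Return the share of favourable outcomes (1) per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in zip(groups, outcomes):
        totals[group] += 1
        positives[group] += outcome
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(groups, outcomes):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(groups, outcomes)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody selected in any group, so no disparity to measure
    return min(rates.values()) / highest


# Hypothetical model decisions (1 = approved) for two demographic groups.
groups = ["A"] * 10 + ["B"] * 10
outcomes = [1] * 8 + [0] * 2 + [1] * 5 + [0] * 5

FOUR_FIFTHS_THRESHOLD = 0.8  # assumed acceptance threshold for the fairness objective
ratio = disparate_impact_ratio(groups, outcomes)
print(f"Disparate impact ratio: {ratio:.3f}")
print("PASS" if ratio >= FOUR_FIFTHS_THRESHOLD else "FAIL: investigate bias before proceeding")
```

A dedicated fairness toolkit would offer many more metrics; the point is that the objective is checked by a number with an agreed threshold, not by impression.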

Assign Responsibility and Track Compliance

Document who is responsible for enforcing each objective in the development team. You might require developers or an AI ethics reviewer to sign off that an objective has been addressed at key milestones (e.g. “Architectural design reviewed for security by Security Team – OK” for a security objective). By making someone accountable for each objective, you create ownership. Also, maintain records (in design documents, testing reports, etc.) showing how objectives were accounted for. This provides evidence for internal audits or external compliance checks that you followed ISO 42001’s intent.
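Ownership and sign-offs can live in whatever tool the team already uses. Purely as an illustration, the sketch below keeps a hypothetical register of objectives, owners, and milestone sign-offs, and lists anything that still blocks a milestone. The field names, milestone label, and people are assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ObjectiveRecord:
    """One responsible-AI objective and its accountability trail (hypothetical schema)."""
    name: str
    owner: Optional[str]
    signoffs: Dict[str, str] = field(default_factory=dict)  # milestone -> reviewer


def open_items(register: List[ObjectiveRecord], milestone: str) -> List[str]:
    """Return the gaps that block the given milestone from closing."""
    gaps = []
    for obj in register:
        if not obj.owner:
            gaps.append(f"{obj.name}: no accountable owner")
        if milestone not in obj.signoffs:
            gaps.append(f"{obj.name}: not signed off for '{milestone}'")
    return gaps


register = [
    ObjectiveRecord("fairness", "AI ethics reviewer", {"design review": "J. Doe"}),
    ObjectiveRecord("security", "Security Team", {}),
    ObjectiveRecord("transparency", None, {"design review": "A. Smith"}),
]

gaps = open_items(register, "design review")
for gap in gaps:
    print(gap)
```

The same register doubles as audit evidence: it shows who was accountable for each objective and when it was signed off.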

This proactive approach results in AI systems that are aligned with ethical standards and stakeholder expectations from day one, reducing the need for costly corrections later.

Control 6.1.3 – A.6.1.3 / B.6.1.3

Processes for Responsible AI System Design and Development

Control A.6.1.3: Define and document specific processes for the responsible design and development of AI systems.

Explanation & Implementation Guidance

Control 6.1.3 requires your organization to establish a formal AI system development process (or methodology) that embeds responsible AI practices.

In other words, beyond just having objectives, you need a well-defined process or workflow that developers must follow to ensure those objectives are achieved. Key elements to include in your AI development process are:

Life Cycle Stages and Phase Gates

Clearly delineate the stages of your AI system life cycle and what activities occur in each stage. For example, stages might include Concept & Feasibility, Requirements & Design, Data Acquisition & Preparation, Model Development, Testing & Validation, Deployment, and Operation & Monitoring.

For each stage, set entry/exit criteria or “stage gates” – i.e., what must be completed and reviewed before moving to the next stage. This could include management approvals or committee sign-offs, especially for high-risk AI applications. Defining stages (you can customize or adopt models like the one in ISO/IEC 22989) ensures a structured approach.

For instance, you might require an AI System Impact Assessment (as per Control 5.2) to be performed during the design stage or before deployment, to evaluate potential impacts on individuals and society. By scheduling such assessments at specific points, you integrate ethical risk checks into the development timeline.
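Stage gates can be written down as data so that they are reviewed and versioned like any other process document. The sketch below reuses the example stages listed above and assumes hypothetical exit criteria, including an impact assessment at the design stage; it reports which criteria still block the move to the next stage.

```python
STAGES = [
    "Concept & Feasibility",
    "Requirements & Design",
    "Data Acquisition & Preparation",
    "Model Development",
    "Testing & Validation",
    "Deployment",
    "Operation & Monitoring",
]

# Hypothetical exit criteria; real ones come from your documented development process.
EXIT_CRITERIA = {
    "Requirements & Design": {"requirements approved", "AI system impact assessment done"},
    "Testing & Validation": {"all release tests passed", "management sign-off"},
}


def gate_check(stage, completed):
    """Return (next_stage, missing_criteria) for leaving `stage`."""
    index = STAGES.index(stage)
    if index == len(STAGES) - 1:
        raise ValueError(f"'{stage}' is the final stage")
    missing = EXIT_CRITERIA.get(stage, set()) - set(completed)
    return STAGES[index + 1], sorted(missing)


next_stage, missing = gate_check("Requirements & Design", {"requirements approved"})
if missing:
    print(f"Cannot enter '{next_stage}'; outstanding: {', '.join(missing)}")
else:
    print(f"Gate passed: proceed to '{next_stage}'")
```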

Testing and Validation Requirements

Your process should specify when and how testing is done throughout development (not just at the end).

Include requirements for unit testing of AI model components, integration testing (combining the model with its software environment), and system testing against the defined objectives. Define the planned testing methodologies and tools up front – for example, requiring bias testing during model training (to address fairness), adversarial robustness testing for security, and user acceptance testing before deployment.

If certain tools are recommended (e.g., a specific fairness audit toolkit or security vulnerability scanner for AI), note those in the process documentation. The goal is to ensure quality and ethical compliance are verified at each stage, rather than discovering problems after deployment.

Human Oversight and Involvement

Integrate human oversight checkpoints into the AI development lifecycle. Identify which stages require human review or intervention to maintain control over AI outcomes, especially if the AI system could significantly impact people.

For instance, you might stipulate that “a human domain expert must review a sample of AI outputs for accuracy and bias before any model is approved for deployment.” Or mandate that an ethics committee reviews the design against responsible AI principles at the design stage. Define what tools or processes facilitate this oversight (like code reviews, ethical review boards, or external audits).

Human-in-the-loop processes ensure that automated decisions can be checked and that accountability is maintained.
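For a rule like "a human domain expert must review a sample of AI outputs before approval", drawing the sample reproducibly lets reviewers and auditors see exactly which cases were checked. A minimal sketch, assuming outputs are identified by case IDs and that a fixed, recorded seed is acceptable in your process; the sample size of 50 and the 95% agreement threshold are illustrative only.

```python
import random


def draw_review_sample(case_ids, sample_size, seed):
    """Reproducibly pick cases for human review; the same seed gives the same sample."""
    rng = random.Random(seed)
    return sorted(rng.sample(list(case_ids), min(sample_size, len(case_ids))))


def review_passes(verdicts, required_agreement=0.95):
    """verdicts maps case ID -> True if the expert agreed with the AI output."""
    agreement = sum(verdicts.values()) / len(verdicts)
    return agreement >= required_agreement, agreement


case_ids = [f"case-{i:04d}" for i in range(1, 1001)]
sample = draw_review_sample(case_ids, sample_size=50, seed=20240101)

# Hypothetical expert verdicts: disagreement on two of the 50 sampled cases.
verdicts = {case: True for case in sample}
verdicts[sample[0]] = False
verdicts[sample[1]] = False

approved, agreement = review_passes(verdicts)
print(f"Expert agreement {agreement:.0%}: {'approve' if approved else 'hold for rework'}")
```

Recording the seed alongside the review results means the sample can be regenerated later if the approval is questioned.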

Data Management Rules

Because data is the fuel of AI, set standards for data usage in development. The process should outline how training and testing data are acquired, and what is acceptable.

For example: only use data from approved sources/vendors (to ensure legality and quality – see Control 7.3: Acquisition of Data), enforce data labeling standards and documentation (see Control 7.6: Data Preparation for how data should be cleaned and labeled), and require assessments of data bias or representativeness (tie-in with Control 7.4: Data Quality).

Also, consider rules for data privacy here: e.g., if personal data is used, ensure privacy impact assessments or anonymization steps are part of the process.
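Data rules like these can be enforced with a simple pre-training check. In the sketch below, the approved-source list, the manifest fields, and the rule that datasets containing personal data need a completed privacy impact assessment are all hypothetical examples of what such a policy might contain.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical allowlist maintained by data governance (see Control 7.3).
APPROVED_SOURCES = {"internal-crm", "vendor-acme-licensed"}


@dataclass
class DatasetManifest:
    name: str
    source: str
    labeling_guide: Optional[str]  # reference to labeling documentation, if any
    contains_personal_data: bool
    privacy_assessment_done: bool


def policy_violations(ds: DatasetManifest) -> List[str]:
    """List every data-management rule this dataset breaks."""
    problems = []
    if ds.source not in APPROVED_SOURCES:
        problems.append(f"unapproved source '{ds.source}'")
    if not ds.labeling_guide:
        problems.append("no labeling documentation")
    if ds.contains_personal_data and not ds.privacy_assessment_done:
        problems.append("personal data without privacy impact assessment")
    return problems


datasets = [
    DatasetManifest("support-tickets-2023", "internal-crm", "guide-v2.pdf", True, True),
    DatasetManifest("scraped-reviews", "web-scrape", None, True, False),
]

for ds in datasets:
    issues = policy_violations(ds)
    print(f"{ds.name}: {'OK' if not issues else '; '.join(issues)}")
```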

Required Expertise and Training

Define what skills or expertise are needed at each stage, and ensure your process accounts for having the right people involved. For instance, during model development, your team might need a domain expert (to ensure the model makes sense for the business context) in addition to data scientists. If such expertise is lacking, your process might require training or hiring before proceeding. Outline any training programs or competency requirements for AI developers (this ties to human resources management in Control 4.6 – ensuring staff have appropriate AI knowledge). For example, mandate that all AI developers undergo an annual ethics and security training specific to AI, or require certification in AI ethics for project leads. Incorporating these ensures the people driving development are prepared to implement responsible practices.

Release Criteria and Change Control

The process should state what criteria must be met before the AI system can be considered “ready” for deployment. This is sometimes called a “definition of done” for development. It could include achieving certain performance metrics, passing all tests (including safety and fairness checks), completing documentation, and obtaining management approval. List the required approvals or sign-offs (e.g., by the AI project manager, compliance officer, and maybe an executive sponsor) before any AI system goes live. Also, integrate a change control procedure: any changes to the AI system (like model updates or major code changes) should go through a formal review and testing cycle again. This might leverage your organization’s existing IT change management process, adapted for AI.

Essentially, treat AI model updates like software updates that cannot be simply pushed without evaluation – require change requests, impact analysis, re-validation, and approval for modifications. This keeps the AI system stable and prevents “rogue” changes that bypass governance.
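One technical safeguard against unreviewed model changes is to record a cryptographic fingerprint of each approved model artifact and check it at deployment time. The sketch below uses SHA-256 from Python's standard library; the in-memory approval set, the file name, and the change-request label are hypothetical stand-ins for a real model registry and change-management tool.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path):
    """Hash a file in 1 MiB chunks so large model files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def deployment_allowed(path, approved_hashes):
    """Allow deployment only if this exact artifact went through change approval."""
    return sha256_of(path) in approved_hashes


with tempfile.TemporaryDirectory() as tmp:
    model = Path(tmp) / "credit_model_v3.bin"  # hypothetical artifact
    model.write_bytes(b"weights approved under change request CR-001")
    approved = {sha256_of(model)}  # recorded when the change request was approved

    ok_before = deployment_allowed(model, approved)
    model.write_bytes(b"weights retrained without review")  # a "rogue" change
    ok_after = deployment_allowed(model, approved)

print("approved artifact deployable:", ok_before)
print("modified artifact deployable:", ok_after)
```

A hash check does not replace the review itself; it only makes it hard for an unreviewed artifact to reach production unnoticed.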

Usability and Controllability

Ensure the development process considers how end-users will interact with the AI system and control it. Include steps for usability testing (is the AI integrated into user workflows smoothly? do users understand the AI outputs?) and controllability checks (can humans override or correct the AI when needed?). For example, during design, require a review of the user interface or API through which humans get AI results, to confirm it provides necessary information (like confidence levels or explanations) and allows feedback or intervention.

Engaging some end-users or UI/UX experts at the design and test stages can be very useful. This aligns with human-centered design principles (as noted in ISO 9241-210 for interactive systems) – basically making sure AI tools are useful and manageable by people rather than being black boxes.

Stakeholder Engagement

Finally, plan for engagement of interested parties at appropriate stages.

“Interested parties” could include customers, end-users, regulators, or others who have a stake in the AI system. For high-impact AI systems, your development process might involve early consultations or feedback sessions with stakeholders to surface concerns. For example, if developing an AI for healthcare, engage healthcare professionals or patient representatives when defining requirements or evaluating prototypes.

Your process could formalize this by requiring stakeholder review meetings or including external reviewers in your approval chain for certain projects.

By documenting and following a robust design and development process that covers all of the above, your organization creates consistency and accountability in how AI systems are built.

Everyone from developers to management will know the roadmap: what steps to take, what checks to perform, and who signs off. This dramatically reduces risks, as it’s far less likely that critical considerations (like bias testing or security review) get skipped. In essence, Control 6.1.3 operationalizes “responsible AI development” by turning it into a repeatable, auditable process rather than a vague intention.

Objective – A.6.2.1 / B.6.2.1

To define the criteria and requirements for each stage of the AI system life cycle.

In simpler terms, clearly define what must be done at each life cycle phase – from design to decommissioning – so that no stage is left unchecked or unmanaged (Objective 6.2.1).

Control 6.2.2 – A.6.2.2 / B.6.2.2

AI System Requirements and Specification

Before jumping into building an AI system, it’s crucial to clearly define what the system is supposed to do and what constraints it must abide by.

Specify and document requirements for new AI systems or material enhancements to existing systems.

Explanation & Implementation Guidance

Control 6.2.2 ensures you have a solid foundation by requiring a documented AI system requirements specification. Key best practices for this control include:

Document the AI System’s Purpose and Scope

Begin by clearly answering “Why are we developing this AI system, and what will it cover?” Identify the business case or need driving the project. For example, is it to improve customer service through an AI chatbot? To detect fraud in financial transactions? Or perhaps it’s mandated by a new regulation or a client request? Writing down this rationale grounds the project and helps stakeholders understand its importance. Specify the intended scope of the AI system – what tasks and decisions it will handle, and equally important, what is out of scope. A well-defined scope prevents “mission creep” and ensures everyone knows the boundaries (e.g. “The AI will assist in loan approval decisions for personal loans up to $50,000, but will not make final decisions on commercial loans”).

Include Functional and Non-Functional Requirements

Just like any software system, your AI system needs both functional requirements (what it should do) and non-functional requirements (quality attributes). For functional requirements, describe the system’s behavior in various scenarios. For non-functional requirements, address performance, accuracy, reliability, security, etc., tailored to AI.

For instance, “The model must achieve at least 95% accuracy on the validation dataset” (performance), or “Responses must be generated within 2 seconds” (speed), or “The system must allow for human review of any AI decision flagged as low confidence” (a control requirement). Also incorporate the responsible AI objectives from Control 6.1.2 as requirements – e.g., fairness requirements (“The model’s decisions shall not disproportionately negatively impact any single demographic group, as verified by bias testing”).

By spanning these, you ensure technical specs meet ethical and user expectations.
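Writing each requirement with an explicit metric, comparison, and threshold makes it directly testable. The sketch below encodes the example requirements from this section; the requirement IDs, metric names, the use of 95th-percentile latency for "within 2 seconds", the use of a disparate impact ratio for the fairness requirement, and the measured values are all assumptions for illustration.

```python
import operator
from dataclasses import dataclass

COMPARATORS = {">=": operator.ge, "<=": operator.le}


@dataclass(frozen=True)
class Requirement:
    req_id: str
    description: str
    metric: str
    comparison: str  # ">=" or "<="
    threshold: float

    def is_met(self, measured):
        return COMPARATORS[self.comparison](measured[self.metric], self.threshold)


REQUIREMENTS = [
    Requirement("NFR-01", "Validation accuracy of at least 95%", "val_accuracy", ">=", 0.95),
    # Assumption: "within 2 seconds" is measured as 95th-percentile latency.
    Requirement("NFR-02", "Responses within 2 seconds", "p95_latency_s", "<=", 2.0),
    # Assumption: fairness is operationalized as a disparate impact ratio of at least 0.8.
    Requirement("FR-07", "No disproportionate impact on any group", "disparate_impact", ">=", 0.8),
]

# Hypothetical measurements from the latest evaluation run.
measured = {"val_accuracy": 0.962, "p95_latency_s": 2.4, "disparate_impact": 0.86}

for req in REQUIREMENTS:
    status = "met" if req.is_met(measured) else "NOT met"
    print(f"{req.req_id} ({req.description}): {status}")
```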

Data and Training Considerations

A critical aspect to include in AI requirements is how the AI will learn and what data it needs. Document your plans or assumptions about data: e.g., “The system will be trained on at least 100,000 labeled customer support emails”, or “Training data will be sourced from internal historical data and augmented with public datasets XYZ”. If data availability is a potential risk (for example, the project depends on buying a dataset or running a data collection effort), note it here so it’s visible early. Also, specify any constraints on data arising from privacy law or other regulations – e.g., “No personal data older than 2 years may be used, in line with our GDPR-based retention policy”.

Also specify how the model will be trained and updated: will it be a one-time training, periodic retraining, or continuous learning? This has implications for infrastructure and later monitoring. Essentially, treat data as part of the requirements, not as an ad-hoc afterthought.

Life Cycle-spanning Requirements

Ensure the requirements cover the entire life cycle of the AI system, not just the initial deployment. This means thinking ahead about maintenance, monitoring, and even retirement.

Example: require that “The system shall provide logging of key events and decisions for auditing” (to support monitoring and Control 6.2.8 event logs), or “The system should be designed so that models can be updated or replaced with minimal downtime” (to facilitate maintenance).

Also consider end-of-life: “If the AI is to be decommissioned, it shall be done in a manner that retains needed data for compliance and removes or archives models securely.”

Including such forward-looking criteria ensures from the start that the system won’t be a black box that’s hard to manage later.
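A requirement such as “the system shall provide logging of key events and decisions for auditing” is easier to verify if the log format is agreed up front. A minimal sketch using Python's standard `logging` and `json` modules; the event names and fields are assumptions, and a production system would send these records to durable, access-controlled storage rather than the in-memory buffer used here.

```python
import io
import json
import logging
from datetime import datetime, timezone

audit_stream = io.StringIO()  # stand-in for a durable audit log sink
audit_logger = logging.getLogger("ai_audit")
audit_logger.setLevel(logging.INFO)
handler = logging.StreamHandler(audit_stream)
handler.setFormatter(logging.Formatter("%(message)s"))  # the message is already JSON
audit_logger.addHandler(handler)
audit_logger.propagate = False


def log_event(event, model_version, **details):
    """Write one structured, timestamped audit record as a JSON line."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "model_version": model_version,
        **details,
    }
    audit_logger.info(json.dumps(record, sort_keys=True))


log_event("prediction", "risk-model-1.4.2", request_id="r-123", score=72, confidence=0.58)
log_event("human_override", "risk-model-1.4.2", request_id="r-123", reviewer="analyst-7")

records = [json.loads(line) for line in audit_stream.getvalue().splitlines()]
print([r["event"] for r in records])
```

Recording the model version with every event is what later lets you tie a questioned decision back to the exact model that made it.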

Iterate and Update Requirements if Needed

Recognize that AI projects are exploratory – sometimes you discover new information or challenges that require revising requirements. The control expects that requirements “should be revisited” if the AI system as initially conceived cannot operate as intended or if new info arises.

For instance, during development you might find that achieving a certain accuracy is infeasible without far more data (making the project financially or technically impractical). In such cases, update the requirements (with proper approval) to reflect a more realistic target or a change in scope. This flexibility is fine as long as changes are documented and justified. It’s better to adjust and document a requirement (e.g., lowering a performance target or narrowing scope) than to proceed under false assumptions.

Each change should trigger a quick re-evaluation: does the new requirement still align with our objectives and risk appetite? If not, maybe the project’s viability is in question.

Maintain a Requirements Traceability Matrix

This is a useful tool – it links each requirement to design elements, development tasks, and eventually to testing and validation results. While not explicitly mandated, a traceability practice helps ensure every requirement is accounted for in the design and tested.

It’s particularly helpful for demonstrating compliance: you can show auditors or management how each ISO control objective (like fairness or safety) was concretely implemented and verified through specific requirements and tests.
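A traceability matrix can be as simple as a mapping from requirement IDs to the tests that verify them, plus the latest test results. The sketch below uses hypothetical IDs and results and reports the two kinds of gap the matrix is meant to expose: requirements with no linked test, and requirements whose linked tests failed or were not run.

```python
# Requirement -> tests that verify it (hypothetical IDs).
TRACE = {
    "REQ-FAIR-01": ["test_disparate_impact", "test_subgroup_accuracy"],
    "REQ-PERF-01": ["test_validation_accuracy"],
    "REQ-LOG-01": ["test_audit_log_written"],
    "REQ-XAI-01": [],  # explanation requirement with no test yet
}

# Latest results from the test run (True = passed). Missing entries count as not run.
RESULTS = {
    "test_disparate_impact": True,
    "test_subgroup_accuracy": False,
    "test_validation_accuracy": True,
    "test_audit_log_written": True,
}


def trace_gaps(trace, results):
    """Return (untested requirements, {requirement: failing or unrun tests})."""
    untested = [req for req, tests in trace.items() if not tests]
    failing = {}
    for req, tests in trace.items():
        bad = [t for t in tests if not results.get(t, False)]
        if bad:
            failing[req] = bad
    return untested, failing


untested, failing = trace_gaps(TRACE, RESULTS)
print("No verifying test:", untested)
print("Failing or not run:", failing)
```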

By thoroughly specifying AI system requirements upfront, you create a reference blueprint for developers, testers, and stakeholders. This reduces ambiguity (everyone knows what to aim for) and helps catch feasibility issues early. It also ties the AI project to business needs and constraints (since you included business rationale and compliance considerations in the spec).

In summary, a well-documented requirement set is the cornerstone of successful and responsible AI system development – it guides the team and provides a baseline for all subsequent life cycle stages.

Skipping or skimping on this step can lead to missed expectations or ethical lapses later, so Control 6.2.2 encourages diligence at this formative phase.

Control 6.2.3 – A.6.2.3 / B.6.2.3

Documentation of AI System Design and Development

Control A.6.2.3 mandates the following:

Document the AI system design and development based on the organizational objectives, documented requirements, and specified criteria.

Explanation & Implementation Guidance

Once you have requirements and begin designing and building the AI system, Control 6.2.3 requires keeping thorough documentation of your design choices and development progress.

This is essential for transparency, knowledge transfer, troubleshooting, and demonstrating that you followed responsible practices. Here’s how to implement this control effectively:

Architectural Design Documentation

Create and maintain a document (or set of documents) that describes the overall architecture of the AI system. This should include diagrams and explanations of how the system is constructed. Key items to cover:

- AI model architecture: What type of AI technique is used (e.g., a supervised machine learning model vs unsupervised, neural network vs decision tree, etc.). Explain why that approach was chosen in light of the objectives (for example, “We chose a supervised learning approach using a neural network to maximize accuracy for image recognition, with careful regularization to avoid overfitting”).

- Components: List all hardware and software components in the system (this ties back to Resource documentation, Control 4.2). For instance, note if you’re using specific AI frameworks (TensorFlow/PyTorch), libraries, cloud services, specialized hardware (GPUs/TPUs), etc. Include how these components interact (data flow diagrams, etc.). This helps others understand the infrastructure and any constraints or dependencies.

- Interface and Inputs/Outputs: Document how the AI system interacts with other systems or users. Describe the inputs it consumes (data types, sources) and the outputs it produces, and any user interface or API. For example, “The AI model is exposed via a REST API that takes JSON input of user profile data and returns a risk score between 0-100.” Also, if applicable, detail how humans can interact – can they provide feedback, override decisions, or must they interpret outputs? This overlaps with controllability considerations (making sure the design accounts for human use).

Design Decisions and Rationale

For significant design choices, especially those impacting ethics or risk, write down the decision rationale. For example, if you decided to exclude certain features from the model to prevent bias (say you chose not to use gender as an input to an AI hiring tool to mitigate discrimination risk), document that decision and why it was made. Or if you opted for one algorithm over another (like a simpler model for explainability instead of a complex black-box model), note that. This provides a trail of how you balanced objectives and constraints. It’s valuable during reviews or if the system’s outcomes are later questioned – you can show the thought process that went into the design. It also helps future maintainers: they won’t unknowingly reverse a prior decision without understanding its context.

Data Design and Quality Considerations

Document what data will be used and how data quality is ensured in the design. Include information about training datasets – sources, volumes, any preprocessing steps, and why those datasets are appropriate. If you assume certain statistical properties (e.g., “assumed data is normally distributed” or “class imbalance is addressed by oversampling class X”), note those. Also note how you have addressed data quality issues (see the A.7 / B.7 controls on data for AI systems).

For example, “Outliers above a certain threshold are removed to improve model stability, as described in our data preprocessing plan.” This documentation not only helps reproducibility of model training, but also surfaces any data limitations which might affect the system’s performance or fairness.

Model Training and Tuning Records

Keep a log of model development iterations – which algorithms or hyperparameters you tried, and what results they yielded (evaluation metrics). Over the course of development, you might create dozens of model versions. Documenting this (even if briefly) prevents wasted effort repeating failed experiments and provides justification for the final model choice.

Include how the model was evaluated and refined: What evaluation metrics were used (accuracy, F1-score, AUROC, etc.) and why? How did the model perform on those metrics? What bias or robustness tests were done?

For instance, “Initial model had an accuracy of 85% but showed a significant bias against older users; we adjusted the training data distribution and added a fairness constraint which improved bias metrics (disparate impact ratio now within acceptable range) while maintaining 82% accuracy.” These kinds of details show that you actively worked to meet responsible AI objectives (like fairness) during development.
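Keeping each training run as a structured record makes the final model choice easy to justify. The sketch below uses hypothetical run records shaped like the example above and selects the most accurate candidate among those that satisfy an assumed fairness constraint (disparate impact ratio of at least 0.8); in practice such records would usually live in an experiment-tracking tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingRun:
    run_id: str
    change: str              # what was tried in this iteration
    accuracy: float
    disparate_impact: float  # lowest / highest group selection rate


# Hypothetical development history.
RUNS = [
    TrainingRun("run-01", "baseline model", 0.85, 0.62),
    TrainingRun("run-02", "rebalanced training data by age band", 0.83, 0.74),
    TrainingRun("run-03", "rebalanced data + fairness constraint", 0.82, 0.88),
]


def select_model(runs, min_disparate_impact=0.8):
    """Most accurate run that meets the fairness constraint, or None if none does."""
    eligible = [r for r in runs if r.disparate_impact >= min_disparate_impact]
    return max(eligible, key=lambda r: r.accuracy, default=None)


chosen = select_model(RUNS)
for run in RUNS:
    marker = "<- selected" if run == chosen else ""
    print(f"{run.run_id}: acc={run.accuracy:.2f} DI={run.disparate_impact:.2f} {run.change} {marker}")
```

Note that the selected run is not the most accurate one overall; the record makes that trade-off, and the reason for it, explicit.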

Security Considerations in Design

Document how you addressed security threats and controls in the AI system’s design. AI systems have some unique security issues – e.g. data poisoning (someone manipulating training data to corrupt the model), model inversion or stealing attacks (where an attacker probes the model to extract sensitive info or replicate it). Note which threats were considered relevant and what mitigation was built in.

For example, “We considered the risk of data poisoning; to mitigate, we implemented data validation checks and will retrain with controlled data only. We also restrict access to the model API to authorized services to reduce model extraction risk.” Include any standard security practices applied (like encryption of model files, secure coding standards followed, etc.).

Auditors and cybersecurity teams often look for this security documentation to confirm that the AI system won’t become an entry point for attacks.

Iterative Design Updates

AI system design might go through multiple iterations (you try a model, then refine requirements or objectives, and redesign parts). Maintain versioned documentation for each major iteration or milestone.

You don’t necessarily need to preserve every minor tweak, but ensure that at the end, you have a final system design document that reflects what was actually built, and that it has been updated with changes.

This final design doc should align with the delivered system (and note any deviations from initial requirements and why). Version control systems or documentation tools can help track changes over time. The main point is not to lose history or context as the design evolves.

By implementing Control 6.2.3, you ensure that knowledge about the AI system is preserved and accessible. Good design and development documentation serves several purposes:

- It helps onboard new team members or partners by explaining how the system works.

- It supports transparency – both internally and for external oversight – by showing you have nothing to hide about the AI’s workings.

- It is invaluable for maintenance and troubleshooting. If an issue arises in production, maintainers can refer to the design docs to pinpoint potential causes (e.g., known limitations in the model).

- It provides evidence that you followed a robust process (which can be useful during ISO 42001 certification audits or stakeholder inquiries).

An AI system can be complex and might operate in critical areas; documenting its design thoroughly is akin to documenting the blueprint of a machine. Without it, you’d be flying blind when trying to understand or fix the AI’s behavior. Thus, Control 6.2.3’s emphasis on documentation directly supports accountability and continuous improvement of AI systems.

Control 6.2.4 – A.6.2.4 / B.6.2.4

AI System Verification and Validation

Control 6.2.4 focuses on establishing a rigorous testing and evaluation regimen for AI systems – both to verify the system meets requirements and to validate that it achieves its intended purpose responsibly.

Define and document verification and validation measures for the AI system and specify criteria for their use.

Explanation & Implementation Guidance

You need a plan for how you will test the AI system, what metrics or benchmarks it must meet, and how often you’ll re-evaluate it. Key practices include:

Define a Comprehensive Testing Strategy

Early in the project (typically during planning or design), define what kinds of tests the AI system will undergo and what tools or frameworks will be used for each. This strategy should cover:

- Functional testing: Does the AI produce the correct or expected outputs for given inputs? This includes unit tests for components (e.g., does a preprocessing function work correctly?) and system tests (e.g., feeding known test cases to see if the AI handles them as expected).

- Performance testing: Measure how well the AI meets quantitative targets – e.g., accuracy, error rates, throughput, response time. For each metric, set acceptable ranges or minimum thresholds that were derived from requirements. For instance, “The model must achieve at least 90% precision and recall on the test dataset” or “The system should handle 100 requests per second with <1s latency”. These become your release criteria – the AI shouldn’t go live unless they are met.

- Domain-specific validation: If the AI is used in a particular domain, ensure tests represent that domain. For example, if it’s a medical diagnosis AI, test on diverse patient data to validate it works across ages, ethnicities, etc. Select test data that reflects the real-world scenarios and edge cases the AI will face. If the AI’s intended domain of use is broad, your test set should be broad; if the domain is narrow, tests should deeply cover that niche.

- Adversarial and robustness testing: For AI, consider tests for robustness against bad inputs or adversarial cases (especially if the system is critical). E.g., apply slight perturbations to inputs to see if a vision model is fooled, or attempt known adversarial examples if applicable. This relates to reliability and safety – you may define an acceptable error margin or tolerance for the system under such conditions. ISO/IEC TR 24029-1, which covers assessing the robustness of neural networks, can provide guidance on methods.

- User acceptance testing: If applicable, have end-users or subject matter experts validate the AI system in a staging environment. Their feedback might reveal if the AI’s outputs are interpretable and useful in practice. Include criteria such as “95% of pilot users are able to understand and utilize the AI’s recommendation correctly” if human-AI interaction is important.
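To illustrate how a performance target becomes a release criterion, the sketch below computes precision and recall from labelled test predictions and compares them with the example "at least 90% precision and recall" threshold. The labels and predictions are made up; a real test would run the model on the held-out test dataset.

```python
def precision_recall(y_true, y_pred, positive=1):
    """Precision and recall for the positive class from paired labels/predictions."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical test set (1 = fraud): 9 frauds caught, 1 missed, 2 false alarms.
y_true = [1] * 10 + [0] * 10
y_pred = [1] * 9 + [0] + [0] * 8 + [1] * 2

THRESHOLD = 0.90  # "at least 90% precision and recall" from the requirement
precision, recall = precision_recall(y_true, y_pred)
release_ok = precision >= THRESHOLD and recall >= THRESHOLD
print(f"precision={precision:.2f} recall={recall:.2f} release_ok={release_ok}")
```

Here recall meets the target but precision does not, so under these criteria the system would not be released.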

Establish Evaluation Criteria for Responsible AI

Beyond basic performance, define how you will evaluate the AI system’s impact and ethics. This could involve:

- Risk and impact evaluation: Develop a plan to evaluate the AI system for risks to individuals, groups, or society. For example, perform an AI impact assessment (again linking to Control 5.2 on impact assessment process) focusing on potential harms or biases. The criteria here might be qualitative (checklist of ethical risk factors) or quantitative (e.g., acceptable bias metrics or fairness indices). The organization should document something like: “We will evaluate whether the AI’s decisions could potentially cause harm or discrimination; any identified risk above a defined threshold triggers a redesign or mitigation step.”

- Acceptable error rates and reliability: Determine what error rate is tolerable given the AI’s context. For instance, in a medical AI, perhaps only a false negative rate below 1% is acceptable for critical conditions. Set these targets explicitly: “The system’s false positive rate in identifying fraud should not exceed X%”, etc. Also consider operational factors – under what conditions will the AI operate, and how might those affect performance? Define acceptable ranges for those: e.g., “This vision AI is intended for well-lit environments; accuracy below 80% in low light is acceptable only if such conditions account for less than 5% of usage.” If certain intended uses are high-stakes, you may impose stricter criteria for those scenarios (like requiring higher accuracy or additional safety checks when the AI is used for, say, medical diagnoses rather than casual scenarios).

- Stakeholder interpretability: A critical validation element is checking that those who rely on the AI outputs can understand them sufficiently. The organization should decide how to evaluate this. For example, if the AI provides credit scores used by loan officers, do those officers find the scores and explanations useful and clear? You might set up an evaluation where a sample of target users interpret AI outputs and rate their clarity. The criteria could be: “All frontline decision-makers must be able to explain the AI’s suggestion to a customer in simple terms,” or “No AI recommendation will be deployed unless users can correctly identify the rationale from provided explanations at least 90% of the time.” Document methods or metrics for this interpretability test (it might be qualitative surveys or specific interpretability scores).

- Frequency of re-evaluation: Determine how often the AI system will be re-tested or audited once operational. For instance, “This AI system will undergo a full evaluation every 6 months or with any significant change (new model version or major data update)”. Frequency might depend on risk: higher-risk systems require more frequent checks. Document this plan so it’s clear that verification and validation isn’t a one-time event but ongoing.
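To make error-rate targets like those above testable, here is a minimal Python sketch that computes false positive and false negative rates from binary labels and checks them against documented limits. The function names and passing limits as arguments are illustrative assumptions, not something ISO/IEC 42001 prescribes; the actual limits (the X% in the fraud example, the 1% in the medical example) remain yours to set.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ErrorRates:
    false_positive_rate: float  # FP / (FP + TN): negatives wrongly flagged
    false_negative_rate: float  # FN / (FN + TP): positives that were missed


def error_rates(y_true: list[int], y_pred: list[int]) -> ErrorRates:
    """Compute FPR and FNR for binary labels (1 = positive, 0 = negative)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    fnr = fn / (fn + tp) if (fn + tp) else 0.0
    return ErrorRates(fpr, fnr)


def within_limits(rates: ErrorRates, max_fpr: float, max_fnr: float) -> bool:
    """True only if both error rates are within their documented limits."""
    return rates.false_positive_rate <= max_fpr and rates.false_negative_rate <= max_fnr
```

Keeping the limits as explicit parameters makes it easy to apply stricter values to high-stakes uses of the same model.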

Evaluate Against Criteria and Document Results

When you run the verification and validation procedures, compare the outcomes against your pre-set criteria.

Keep a record of the evaluation results. If the AI system meets or exceeds all criteria, it can be approved for release (this should be formally recorded, e.g., in a test summary report signed off by QA or compliance).
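One way to keep this comparison objective is to record each pre-set criterion as data and evaluate measured results against it mechanically. The sketch below assumes a hypothetical `Criterion` structure and treats a missing measurement as a failure; the resulting record is meant to be attached to the test summary report, with `approved_by` filled in at formal sign-off.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Criterion:
    name: str
    metric: str             # key into the measured results
    threshold: float
    higher_is_better: bool  # True: value must be >= threshold; False: value must be <= threshold


def evaluate(criteria: list[Criterion], results: dict[str, float]) -> dict:
    """Compare measured results to pre-set criteria and build a reviewable record."""
    rows = []
    for c in criteria:
        value = results.get(c.metric)
        if value is None:
            passed = False  # a missing measurement counts as a failure, not a pass
        elif c.higher_is_better:
            passed = value >= c.threshold
        else:
            passed = value <= c.threshold
        rows.append({"criterion": c.name, "value": value,
                     "threshold": c.threshold, "passed": passed})
    return {
        "date": date.today().isoformat(),
        "results": rows,
        "all_passed": all(r["passed"] for r in rows),
        "approved_by": None,  # filled in at formal sign-off (e.g., QA or compliance)
    }
```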

If the system fails to meet any criteria, especially those tied to responsible AI objectives (like fairness or safety thresholds), the organization has a decision to make:

- Ideally, address the deficiencies and improve the system (e.g., retrain the model with more data, adjust the algorithm, add a mitigation for the identified issue) and then re-test.

- If certain shortcomings cannot be fully resolved (maybe due to technical limitations or trade-offs), you should reconsider the intended use or scope of the AI system.

For example, if an AI cannot achieve the required accuracy for a high-stakes use, you might restrict it to an advisory role rather than fully automated decisions, or use it only in low-risk scenarios. Document any such decisions and how you will compensate (for instance, by adding extra human review steps if the error rate is higher than desired). In extreme cases, if an AI system poses unacceptable risks that cannot be mitigated, the organization should be prepared not to deploy it at all until those issues are resolved.

Pay particular attention to failures to meet ethical or compliance objectives, e.g., if bias tests show the AI is still unfair, or if safety tests indicate potential harm. ISO 42001 expects that if the system cannot meet the responsible AI development and use objectives (such as those defined in Control 6.1.2 or the objectives for responsible AI use in Control 9.3), you will manage or avoid those deficiencies. That might mean more development work, adding safeguards, or narrowing the system’s deployment as described above. Any such decision should involve relevant leadership and, if needed, stakeholder communication (especially if a promised AI feature has to be delayed or altered because of these concerns).

Document Verification & Validation Process and Criteria

As part of compliance, maintain a documented Verification and Validation (V&V) plan. This typically outlines all of the above: what will be tested, how, by whom, and what the pass/fail criteria are. Also document the final evaluation report. In regulated environments, this might be needed for audits or regulatory submissions. It’s also useful internally to learn from one project to the next (e.g., if you found certain tests particularly valuable or discovered new failure modes to test for next time).

Robust verification and validation, as required by Control 6.2.4, ensure that you don’t just trust an AI system blindly: you thoroughly probe it before and during operation to confirm it’s fit for purpose.

This process gives confidence to your organization and stakeholders that the AI is working correctly and ethically. It also creates a feedback loop: the insights from validation (like where the AI struggled) can inform improvements in the next development cycle or even updates to your objectives.

Treating AI validation with the same rigor as, say, testing a new aircraft or medical device (appropriately scaled to the risk) is how you prevent costly failures and uphold trust in AI systems.

Control 6.2.5 – A.6.2.5 / B.6.2.5

AI System Deployment

Control 6.2.5 emphasizes carefully planning the transition from development to production and making sure all prerequisites are satisfied before the AI goes live.

Document a deployment plan and ensure that appropriate requirements are met prior to deployment.

Explanation & Implementation Guidance

After development and testing, deploying an AI system into its production or live environment is a critical step. Key aspects of good AI deployment practice include:

Create a Formal Deployment Plan

Develop a deployment plan document for each AI system release. This plan should outline when, where, and how the AI solution will be deployed. Include specifics like:

Deployment environment

Describe the target environment (e.g., on-premises data center, specific cloud platform, embedded device, etc.). If different from the development environment, note the differences and any adjustments needed (for example, “Model developed on local servers will be deployed on AWS SageMaker – ensure compatible runtime and libraries are used”).

Deployment steps

List the sequence of steps to deploy, such as provisioning hardware, installing software components, loading the AI model, configuring system settings, and connecting to data sources. Essentially, this is like a playbook to go from “tested in staging” to “running in production.”

Roles and responsibilities

Identify who is in charge of each step (DevOps engineer, data scientist, IT admin, etc.), and who oversees/approves the go-live.

Backout plan

Very important – plan what happens if something goes wrong or if the decision is made to abort the deployment. This could include steps to roll back to a previous version or to disable the AI component safely. For instance, “If deployment fails or metrics degrade by >X%, switch back to previous model version within 1 hour”. Having a rollback strategy minimizes the risk of prolonged outages or faulty AI behavior in production.
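A backout trigger such as “metrics degrade by >X%” can be expressed as a small check. The sketch assumes a single higher-is-better metric, an ordered list of model version labels, and a relative (not absolute) degradation threshold; all names are hypothetical.

```python
def should_roll_back(baseline: float, current: float, max_degradation: float,
                     deployment_failed: bool = False) -> bool:
    """Decide whether to trigger the backout plan.

    baseline / current: a higher-is-better metric (e.g., accuracy) measured
    before and after the release.
    max_degradation: maximum tolerated relative drop, e.g. 0.05 for 5%.
    """
    if deployment_failed:
        return True
    if baseline <= 0:
        raise ValueError("baseline must be positive to compute a relative drop")
    relative_drop = (baseline - current) / baseline
    return relative_drop > max_degradation


def select_model_version(versions: list[str], active: str, roll_back: bool) -> str:
    """Return the version to serve: the previous one if rolling back, else the active one."""
    if not roll_back:
        return active
    idx = versions.index(active)
    if idx == 0:
        raise RuntimeError("no previous version available to roll back to")
    return versions[idx - 1]
```

Using a relative drop keeps the same X% rule meaningful across metrics with different scales.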

Account for Environment Differences

AI systems are sometimes developed in one setting and deployed in another. Ensure your plan addresses any infrastructure or context changes. For example:

- If you trained a model on premises but will deploy it in a cloud service, ensure compatibility and that cloud security controls are in place (like proper access keys, network settings, etc.).

- If the model will run on an edge device with lower resources, plan for optimizing the model (like compressing it or using a smaller version).

- Consider data pipeline differences – maybe in development you used static test data, but in deployment it will pull streaming real-time data. The plan should include setting up those live data integrations and testing them.

- Also consider deployment segmentation: sometimes AI system components are deployed separately. For example, a central server hosts the model, but a client application on user devices queries it. If components are separated (like a model file and a software application), ensure the plan covers deploying each component in the correct order and verifying that they communicate properly once live.

Pre-Deployment Checklist (Release Criteria)

Before giving the green light, all pre-deployment requirements must be met – essentially a final go-live checklist. This typically includes:

- Verification & validation passed: Confirm that all tests and validations (from Control 6.2.4) have been successfully completed and any issues resolved. The deployment plan should state that no deployment occurs unless the model met its acceptance criteria and was approved by the relevant authority (e.g., AI governance committee or product owner).

- Approvals obtained: Ensure that all necessary management or oversight approvals are signed off. For high-risk AI, you might require executive sign-off or even external expert review sign-off. The plan should list who must approve (names or roles) and record when they did.

- User training or documentation ready: If the AI system requires end-user training or new user guides (especially if it changes workflows), verify that these materials have been prepared and delivered. For example, “Customer support staff have been trained on the new AI-assisted ticket triage system prior to go-live”.

- Monitoring setup: It’s wise to set up monitoring tools (see also Control 6.2.6 on operation & monitoring) before deployment or as part of deployment, so that as soon as the AI is live, you can track its performance and detect anomalies. The plan can include steps like “Implement monitoring dashboards, set up alerting for key metrics (e.g., error rate, response time, model confidence below threshold)”.

- Fall-back measures: Ensure any necessary fall-backs are in place at go-live. For instance, if the AI output will feed into an automated process, you might initially run it in “shadow mode” (where it makes predictions but a human still makes the final decision) to validate real-world performance. Or have a rule that if the AI output is uncertain or fails, the system falls back to a default safe action or human decision. Planning these beforehand prevents panic if something doesn’t work perfectly on day one.
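The checklist above can be enforced as a go/no-go gate that lists every blocker instead of failing silently. This is a minimal sketch: the field names and the use of role names for approvals are assumptions, and a real gate would read this status from your release tooling rather than from hand-set flags.

```python
from dataclasses import dataclass, field


@dataclass
class ReleaseChecklist:
    vv_passed: bool = False  # acceptance criteria met (Control 6.2.4)
    required_approvers: set[str] = field(default_factory=set)
    approvals: set[str] = field(default_factory=set)  # roles that have signed off
    user_training_done: bool = False
    monitoring_configured: bool = False
    fallback_in_place: bool = False


def go_no_go(c: ReleaseChecklist) -> tuple[bool, list[str]]:
    """Return (go, blockers). Deployment proceeds only if there are no blockers."""
    blockers = []
    if not c.vv_passed:
        blockers.append("verification & validation not passed")
    missing = c.required_approvers - c.approvals
    if missing:
        blockers.append("missing approvals: " + ", ".join(sorted(missing)))
    if not c.user_training_done:
        blockers.append("user training/documentation not ready")
    if not c.monitoring_configured:
        blockers.append("monitoring not configured")
    if not c.fallback_in_place:
        blockers.append("fall-back measures not in place")
    return (not blockers, blockers)
```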

Stakeholder Communication and Involvement

The deployment plan should consider notifying and involving relevant stakeholders about the AI system going live. From a governance perspective, this could mean:

- Informing business owners or process owners that an AI component will be active (so they can be extra attentive to results initially).

- If the AI impacts external users or customers, consider whether any disclosure or communication is appropriate (for transparency, you might need to tell users an AI is involved – which relates to Annex A.8 controls on information for users). The deployment plan could tie into a communication plan or PR if it’s a big change.

- Incorporate the perspectives of those stakeholders into the deployment schedule. For example, deploy at a time that minimizes impact (maybe after business hours or in a slow period) to allow a cushion for fixing issues.

- Ensure support teams are on standby during and after deployment, in case users or systems encounter problems (this overlaps with support plans in Control 6.2.6).

Pilot or Phased Deployment (if applicable)

For complex or high-risk AI systems, a best practice is to deploy in phases rather than all at once. This might include:

- A pilot deployment to a small user group or a subset of data, monitoring results closely before wider rollout.

- A parallel run, where the AI runs alongside the existing system to compare outcomes without actually affecting decisions initially.

- Gradual scaling (e.g., enabling the AI for 10% of cases, then 50%, then 100% as confidence grows).

If you choose such an approach, document it in the plan: “Deployment will proceed in three phases: pilot with department X for 2 weeks, then extend to half the company’s users, then full deployment. Criteria for moving to each next phase: e.g., no major issues found and key metrics stable or improved.” This method helps catch any surprises in a controlled way.
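For gradual scaling, a common pattern (an assumption here, not a requirement of the standard) is to hash a stable case ID into 100 buckets, so the same case stays in the AI group as the percentage grows from 10% to 50% to 100%. The sketch also encodes the example promotion rule: advance only when no major issues were found and key metrics are stable. `PHASES` and the function names are hypothetical.

```python
import hashlib

PHASES = [10, 50, 100]  # hypothetical percentages of cases routed to the AI


def bucket(case_id: str) -> int:
    """Map a case ID to a stable bucket in [0, 100) using SHA-256."""
    digest = hashlib.sha256(case_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def use_ai(case_id: str, rollout_percent: int) -> bool:
    """Route a case to the AI if its bucket falls inside the current rollout share."""
    return bucket(case_id) < rollout_percent


def next_phase(current_percent: int, major_issues: int, metrics_stable: bool) -> int:
    """Advance to the next phase only if the documented promotion criteria hold."""
    if major_issues > 0 or not metrics_stable:
        return current_percent  # hold here (or roll back per the backout plan)
    later = [p for p in PHASES if p > current_percent]
    return later[0] if later else current_percent
```

Hashing rather than random sampling keeps assignment reproducible, which makes it easier to compare outcomes for the same cases across phases.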

A well-managed deployment per Control 6.2.5 means no loose ends are left: the system enters production only when it’s truly ready and the systems and people around it are prepared. This minimizes disruption and ensures the AI starts delivering value safely and as intended from day one. Skipping proper deployment planning can lead to scenarios where an AI system malfunctions in production or causes confusion among staff – outcomes that can erode trust in the AI and the project. Thus, treating deployment with diligence protects both the AI system’s integrity and the interests of all parties affected.

Control 6.2.6 – A.6.2.6 / B.6.2.6

AI System Operation and Monitoring

Control 6.2.6 requires you to plan how you will keep the AI system running reliably and responsibly in production.

Define and document the necessary elements for the ongoing operation of the AI system. At a minimum this should include system and performance monitoring, repairs, updates, and support.

Explanation & Implementation Guidance

After an AI system is deployed, the work isn’t over – it enters the operation phase, where it needs to be monitored, maintained, and supported just like any other critical system (perhaps even more so, given AI’s tendency to evolve or degrade over time).

Key elements to address include:

System & Performance Monitoring

Establish processes and tools to continuously monitor the AI system’s performance and health. This includes:

- Technical uptime and error monitoring: Ensure the AI service or model is running without failures. Use logging and alerting (see Control 6.2.8 on event logging) to catch errors or crashes. For example, set up alerts if the AI service goes down or if it outputs an abnormal result (such as a “NULL” prediction or a value outside the expected range).

- Performance metrics tracking: Track the AI’s key performance indicators in real-time or through regular reports. These may include accuracy metrics (if ground truth or feedback is available over time), success rates, or business KPIs (e.g., conversion rate if it’s a recommendation engine). Compare current performance to the expected baseline established during validation. If you see degradation – e.g., model accuracy dropping significantly on new data – that’s a sign the model might need attention (data drift, concept drift, etc.).

- Monitoring for expected behavior: Monitor whether the AI is operating within its intended parameters. For example, if the AI is only supposed to be used on a certain population or data type, make sure it’s not inadvertently being applied outside that scope. If your monitoring of inputs shows data coming in that’s outside what the model was trained on, that’s a red flag that the situation has changed.

- Criteria related to commitments and stakeholder needs: If you have SLAs or internal expectations (like response time commitments to users or accuracy commitments to a regulator), monitor those continuously. Also keep an eye on user satisfaction or complaints, as those can indicate issues not caught by technical metrics (e.g., users might find an AI suggestion inappropriate even if technically it’s within spec). Essentially, monitoring isn’t just technical; incorporate user-centric and stakeholder-centric metrics too, such as customer feedback or compliance audit results.
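The first and third checks above (abnormal outputs and inputs outside the intended scope) can be sketched as simple runtime guards. The expected output range and per-feature training ranges are hypothetical inputs you would derive from validation; range checks on individual features are a deliberately crude proxy for “outside what the model was trained on.”

```python
import math
from typing import Optional


def output_alerts(prediction: Optional[float], low: float, high: float) -> list[str]:
    """Flag abnormal outputs: missing, NaN, or outside the expected range."""
    if prediction is None:
        return ["prediction is null"]
    if math.isnan(prediction):
        return ["prediction is NaN"]
    if not (low <= prediction <= high):
        return [f"prediction {prediction} outside expected range [{low}, {high}]"]
    return []


def out_of_scope_features(features: dict[str, float],
                          training_ranges: dict[str, tuple[float, float]]) -> list[str]:
    """Return names of features that are missing or outside the ranges seen in training."""
    flagged = []
    for name, (lo, hi) in training_ranges.items():
        value = features.get(name)
        if value is None or not (lo <= value <= hi):
            flagged.append(name)
    return flagged
```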

Continuous Learning and Drift Management

Some AI systems have the ability to learn on the fly or update themselves with new data (online learning or periodic retraining). If yours does:

- Put safeguards in place to monitor the evolving model. Just because the model updates itself doesn’t mean it’s always improving – it could drift in a bad direction (for example, a model retrained on recent biased data could become biased). Establish triggers to evaluate the model after each learning cycle or at set intervals (e.g., rerun a validation suite or bias check after every retraining).

- Ensure that any continuous learning is controlled. It might be wise to have a “staging” or shadow model that learns continuously, and to deploy the updated model to production only after review. If that’s not feasible and the model updates in production, at least define performance thresholds so that, if performance deviates beyond them, the model update is rolled back or flagged for human review.

- Even for static models, concept drift (the world changing so that the model’s predictions worsen) is a risk. For example, a model predicting consumer behavior might degrade if user preferences shift significantly over time. To handle this, analyze the data coming in periodically: does it look statistically different from the training set? If yes, plan for model retraining or adjustment. Set a schedule or criteria: e.g., “If accuracy on the last month’s data (measured via ground truth or proxy) falls below 90%, initiate a model retraining with more recent data.” ISO/IEC 23053 provides guidance on detecting concept drift – you might employ techniques from there. The key is not to assume your model will be as good next year as it was at deployment; proactively watch for drops in performance that indicate the need for maintenance.
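The drift check described above can be implemented in many ways. The sketch below uses the Population Stability Index (PSI), a widely used statistic for comparing a live feature distribution against the training distribution; it is one option among several and not a method mandated by ISO/IEC 42001 or ISO/IEC 23053. The 0.25 PSI threshold is a common industry rule of thumb, and the 90% accuracy floor mirrors the example in the text; both are assumptions to tune.

```python
import math
from bisect import bisect_right


def _proportions(values: list[float], edges: list[float]) -> list[float]:
    """Share of values falling in each bin defined by sorted interior bin edges."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    n = len(values)
    return [c / n for c in counts]


def psi(expected: list[float], actual: list[float], edges: list[float],
        eps: float = 1e-6) -> float:
    """Population Stability Index between training (expected) and live (actual) data."""
    e = _proportions(expected, edges)
    a = _proportions(actual, edges)
    total = 0.0
    for pe, pa in zip(e, a):
        pe, pa = max(pe, eps), max(pa, eps)  # avoid log(0) for empty bins
        total += (pa - pe) * math.log(pa / pe)
    return total


def needs_retraining(recent_accuracy: float, psi_value: float,
                     min_accuracy: float = 0.90, max_psi: float = 0.25) -> bool:
    """Trigger retraining if accuracy falls below target or inputs have shifted."""
    return recent_accuracy < min_accuracy or psi_value > max_psi
```

Combining an outcome metric (accuracy, when ground truth arrives) with an input metric (PSI, available immediately) gives an earlier warning than either alone.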

Incident Response, Repairs, and Updates

Develop procedures for handling errors, failures, or any incidents involving the AI system. This is akin to an IT incident response but tailored for AI-specific issues too:

- Error handling: Define what constitutes an AI system “failure.” It could be technical (system outage) or functional (AI made a critical wrong decision). For different categories, have an action plan. For technical issues, standard IT support kicks in (restart service, fix bug, etc.). For functional issues (like discovering a harmful bias in decisions), convene the appropriate team (data scientists, ethics officer, etc.) to diagnose and correct the model or process.

- Repair process: Have a clear chain of command and process for investigating AI issues. For example, if the AI is producing unexpected outputs, who will analyze it (AI developers)? Who decides on pulling it from production if needed (product owner or risk manager)? Set these roles in advance so you’re not scrambling during a crisis. Document known potential failure modes and remediation steps for each if possible.

- Updates and Patch Management: Over time, you will apply updates – whether it’s improving the model, fixing a bug, or adjusting for new regulations. Create a controlled update process (similar to deployment): include testing updates in a non-production environment, getting necessary approvals, communicating changes to users if it affects them, and scheduling updates at appropriate times. Maintain a log of changes (version history) including what changed and why (this overlaps with technical documentation in Control 6.2.7).

- Some updates might be urgent (e.g., a critical issue or vulnerability found). Plan for emergency fixes: who can approve a hotfix, how quickly can it be rolled out, and how to validate it quickly. Ensure even urgent changes get documented after the fact.
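A change log entry can be kept as a structured record so that each update states what changed, why, and whether it was re-validated. The fields below are a hypothetical minimum; the `emergency` flag reflects the point that hotfixes must still be documented, even after the fact.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass(frozen=True)
class ChangeLogEntry:
    version: str
    released: date
    change: str        # what changed
    reason: str        # why it changed (bug fix, accuracy, new regulation, ...)
    revalidated: bool  # was the V&V suite re-run before release?
    approved_by: str
    emergency: bool = False  # hotfixes still get documented, after the fact if needed


def append_entry(log: list[ChangeLogEntry], entry: ChangeLogEntry) -> None:
    """Append an entry, rejecting duplicate version numbers."""
    if any(e.version == entry.version for e in log):
        raise ValueError(f"version {entry.version} already recorded")
    log.append(entry)


def to_json(log: list[ChangeLogEntry]) -> str:
    """Serialize the log; dates are written in ISO 8601 format."""
    return json.dumps(
        [{**asdict(e), "released": e.released.isoformat()} for e in log], indent=2
    )
```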

Operational Changes and Re-purposing

Sometimes, after deployment, the use of the AI system might expand, or users might start using it in unanticipated ways. For example, a chatbot originally meant for FAQs might start being used for customer complaints. The control suggests considering whether the AI is being used as intended and, if not, evaluating whether that use is appropriate. If new uses emerge, formally assess them: Does the AI perform adequately for that scenario? Are there new risks? If an AI system is being repurposed or significantly changed in operation, treat it almost like a new project: revisit requirements, risk assessment, etc., and adjust controls accordingly. Your operational procedures should require evaluation and approval before an AI system is deployed in a novel context. In some cases, you might decide an AI is not appropriate for a certain use and disallow it, or you might need to add safeguards for that context. Keep communication open with users so they understand the intended use (and perhaps include warnings or usage guidelines in user documentation to prevent misuse, linking to Control 8.5: Information for Interested Parties if needed).

Support and Incident Reporting

Determine how ongoing support will be provided for the AI system:

- Internal support: If internal teams (employees) use the AI, set up a support channel for them to report issues or ask questions. For instance, an AI tool used by staff might confuse them at times – ensure they know how to get help (maybe an internal wiki or direct line to the AI development team or helpdesk).

- External support: If customers or external users interact with the AI (like a chatbot or AI feature in a product), have a way for them to get support too. This could be integrated into existing customer support. Make sure support teams are trained to handle AI-related queries. They should know how the AI works generally, its limitations, and how to escalate problems to technical teams when needed.

- Incident logging and SLA: Define how incidents related to the AI (errors, service downtime, or even cases of the AI giving problematic outputs) are logged and tracked. If you have service level agreements (uptime, response times), monitor those and report incidents per normal ITIL practices. You might also set internal ethical SLAs, such as zero validated instances of the AI causing legal compliance issues; any such instance is treated as a severity-1 incident.

- Metrics for support: Track support tickets or incidents related to the AI. A spike in questions or complaints could indicate a problem (maybe the AI is confusing users or making mistakes). Use these metrics to decide if retraining or redesign is needed. Also consider a service level for support – e.g., if the AI is critical, ensure 24/7 on-call support is available to fix outages quickly.
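To turn “a spike in questions or complaints” into an alert, one simple approach (assumed here, not prescribed) is to compare the current period’s ticket count against the historical mean plus k standard deviations. The default k = 3 and the floor on the standard deviation are illustrative choices.

```python
from statistics import mean, stdev


def ticket_spike(history: list[int], current: int, k: float = 3.0) -> bool:
    """Flag the current period if tickets exceed the historical mean by k standard deviations."""
    if len(history) < 2:
        return False  # not enough history to estimate variability
    mu, sigma = mean(history), stdev(history)
    # Floor sigma at 1 so a perfectly flat history doesn't alert on a single extra ticket.
    return current > mu + k * max(sigma, 1.0)
```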

Security Monitoring

Ensure you are also monitoring for AI-specific security threats:

- Monitor data inputs for signs of poisoning attempts (e.g., someone might input malicious data hoping to skew the model if it learns online).

- Watch access logs for unusual activity that might indicate someone trying to steal the model or probe it extensively (lots of unusual queries could indicate a model extraction attack attempt).

- If the AI uses sensitive data, continuously ensure compliance with data security measures (access controls, encryption, etc., linking to your InfoSec controls).

- Incorporate your AI system into your information security monitoring regime. For instance, extend SIEM (Security Information and Event Management) rules to cover AI components, and train incident responders on scenarios like “model output was manipulated” or “suspicious spikes in certain output patterns that could indicate adversarial input.”
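As a starting point for spotting possible model extraction, you could flag clients whose query volume in a sliding time window exceeds a limit. This is a minimal sketch under stated assumptions: the window, limit, and client IDs are hypothetical, volume alone is a crude signal, and flagged clients would feed into your existing SIEM or review process rather than be blocked automatically.

```python
from collections import defaultdict, deque


class QueryRateMonitor:
    """Flag clients whose query volume in a sliding time window exceeds a limit."""

    def __init__(self, window_seconds: float, max_queries: int):
        self.window = window_seconds
        self.max_queries = max_queries
        self._events: dict[str, deque] = defaultdict(deque)  # client -> query timestamps

    def record(self, client_id: str, timestamp: float) -> bool:
        """Record one query; return True if this client should be flagged for review."""
        q = self._events[client_id]
        q.append(timestamp)
        # Drop timestamps that have fallen out of the window.
        while q and q[0] <= timestamp - self.window:
            q.popleft()
        return len(q) > self.max_queries
```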

Periodic Performance Reviews

Over longer periods, conduct formal reviews of the AI system’s overall performance and impact. For example, quarterly or annually, do an audit: Is the AI still meeting its goals? How accurate is it now? Any new ethical concerns? In these reviews, involve stakeholders (business, technical, compliance) to decide if any updates to objectives or processes are needed. This ties in with ISO 42001’s continual improvement emphasis (clauses 9 and 10).

The goal is to prevent situations where an AI is deployed and then “forgotten,” which can lead to unchecked drift, degraded performance, unnoticed biases creeping in, or security vulnerabilities. Instead, the AI system should be managed like a “living” system – continuously watched and cared for.

Good operation and monitoring practices allow your organization to trust but verify the AI’s ongoing behavior, maximizing its benefits while promptly catching and addressing any downsides.

Control 6.2.7 – A.6.2.7 / B.6.2.7

AI System Technical Documentation

Control 6.2.7 is about identifying what information different audiences might need about the AI system and making sure that documentation is prepared and shared appropriately.

Determine what AI system technical documentation is needed for each relevant category of interested parties (such as users, partners, or regulators), and provide the technical documentation to them in the appropriate form.

Explanation & Implementation Guidance

Technical documentation for AI systems serves as a user manual, transparency report, and reference guide for various stakeholders. Essentially, it’s about being transparent and informative regarding your AI. Here’s how to tackle it:

Identify Stakeholder Documentation Needs

Different stakeholders will require different levels of technical detail:

- End Users / Operators: Need documentation on how to use the AI system safely and effectively. This might include a user guide with instructions, limitations, and how to interpret outputs. For example, “After you upload a document, the AI will highlight sections it finds relevant. Verify these suggestions, as the AI might miss context. If a section is highlighted incorrectly, you can mark it as a false positive to improve the system.” Include any controls the user has (like turning the AI on/off, adjusting sensitivity, or providing feedback). Essentially, for users, focus on practical usage information and cautions. If the AI is part of a product, integrate this into the product manual or help center.

- Internal Technical Teams / Engineers: Need detailed technical docs to maintain or update the system. This would include system architecture docs, API specifications, data schemas, configuration settings, etc. They should have access to the full design and development documentation (from Control 6.2.3) – possibly an internal technical report or wiki that covers the model internals, training approach, feature definitions, etc. Also include standard operating procedures for them (some of which overlap with operations from 6.2.6): e.g., how to retrain the model, how to deploy a new version, how to handle common error scenarios. Keep these updated with each iteration.

- Partners/Clients (if providing AI system externally): If you deliver an AI system to customers or integrate with partner systems, you might need to provide technical specs to them. This could be API documentation, integration guidelines, data format requirements, and possibly a description of the model’s intended use and performance. For instance, a client integrating your AI via API would need to know input/output formats, rate limits, expected error codes, etc. If partners require it, share information about the model’s limitations too (for example, “This model was trained on US English text and may not perform well on other dialects” – this manages expectations and helps partners use the AI appropriately).

- Regulators or Auditors: In certain industries (finance, healthcare, etc.), regulators may require documentation to ensure compliance. This could include explanations of how the AI makes decisions (algorithmic transparency), what data it uses, its accuracy rates, bias mitigation steps taken, results of validation, and ongoing monitoring plans. Essentially, a comprehensive technical dossier that demonstrates you have control over the AI and it meets relevant standards. If, for example, you operate in the EU and your system is classified as high-risk under the EU AI Act, you’ll need a technical documentation file containing much of what ISO 42001 already has you compile (data description, design, risk management, etc.). Be prepared to furnish such documentation when requested by oversight bodies.

- Supervisory Authorities or External Assessors: Similar to regulators, if you are seeking certification (like ISO 42001 certification, or perhaps an external ethics review), those assessors will need technical info. Tailor a documentation pack that covers how you meet each control/objective – essentially the evidence. This might include many documents (policies, design docs, test reports). Ensure they’re well-organized and accessible.

Elements to Include in Technical Documentation

The standard gives examples of what might be included. Generally, ensure the following elements (as applicable) are documented and available:

- General system description & purpose: A high-level overview of what the AI system is and is meant to do (good for all audiences, but written in varying detail). For a non-technical audience, a plain language description of the AI’s purpose and benefits is useful.

- Architecture and design specifics: Provide system architecture diagrams, information flow charts, and a description of components. (You likely produced this for Control 6.2.3 – now it’s about packaging it for others). Include specifics on the model type, algorithm, and how it was developed. If there are multiple components (like a pipeline of multiple models or preprocessing steps), explain each.

- Technical assumptions and limitations: Clearly state any assumptions the system makes (e.g., “assuming input data is in English; accuracy may drop for other languages”). List known technical limitations: acceptable error rates (as determined in validation), conditions where the system is less reliable (like the vision AI example – “performance degrades in low-light images”), and any constraints (e.g., requires internet connectivity, requires at least 8GB RAM, etc.). Also mention if the system has guardrails – like “the chatbot will not answer medical questions” if that’s intentionally constrained. Users and auditors appreciate when you’re upfront about what the AI can and cannot do.

- Monitoring and control features: Describe what mechanisms exist for monitoring the system’s operation and if users/operators can intervene. For example, “The system interface allows an operator to override the AI decision if needed”, or “The model outputs a confidence score; decisions below 60% confidence automatically get flagged for human review”. If there are built-in fail-safes or shutdown procedures (like an ability to quickly disable the AI component), document those too.

- Life cycle documentation: Essentially an encapsulation of the outputs from controls 6.2.2 to 6.2.6:

  - Requirements and design criteria (could be summarized for external docs).

  - Design choices and quality measures: e.g., “We chose a random forest model to enhance interpretability, and we performed bias mitigation by reweighing training samples.” This shows you took quality seriously.

  - Data information: what data was used for training, its provenance (source and any cleansing done). Possibly include data schemas or sample data if helpful.

  - Verification and validation records: summarize the testing results or attach a test report. For a regulator, you might provide the full validation report with metrics and sign-offs.

  - Risk management and impact assessment summary: e.g., “We conducted an AI impact assessment (reference document X) and identified low risk for discrimination due to implemented bias controls. Ongoing monitoring is in place.” If an impact assessment document exists (from B.5 controls), it can be referenced or included.

- Changes and updates: maintain a documented change log of the AI system. For instance, keep a version history listing the dates of new model versions or major changes, with reasons (bug fix, improved accuracy, etc.). This is crucial for accountability – if someone later asks “why did performance drop last March?”, you can correlate to “we updated the model on March 1 with new data”. Each update entry should note if re-validation was done and any new limitations introduced or resolved.

- Failure and incident handling procedures: Document what you have planned for failures (this overlaps with operation planning). For technical documentation shared with users or clients, you might include “what to do if the system is not functioning normally” (e.g., contact support, or steps to restart if it’s an on-prem solution). For internal docs, detail the rollback plans or incident response as prepared.

- Roles and responsibilities: Especially for internal documentation or regulators, list the roles responsible for the AI system (who maintains it, who to contact if issues, who approves changes). This ties into governance – showing that accountability is assigned (e.g., AI Product Owner: Jane Doe; AI Model Trainer: Data Science Team A; AI Compliance Officer: John Smith).

- Standard Operating Procedures (SOPs): Provide any SOPs related to the AI (like procedures for monitoring logs daily, procedure for periodic retraining, procedure for reviewing ethical implications annually, etc.). Regulators or auditors love to see that you have concrete procedures, not just ad-hoc management. If your AI is critical, having an SOP for investigating incidents or updating the model will give confidence that operations are robust.

- User documentation considerations: if the technical documentation will be provided to end users or customers, ensure it follows any relevant guidelines for clarity and completeness. In practice, make sure that a user who reads it understands how to use the AI safely and whom to contact with concerns. Avoid overly technical jargon for general users; consider including an FAQ or simple explanations instead. This aligns with Control 8.2: System documentation and information for users, which essentially says users should have the information needed to understand and trust the AI. (For more on what to communicate to users, refer to that control’s guidance once available.)
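To make the “why did performance drop last March?” question from the change-log item concrete, here is a minimal sketch of a change log kept as structured data rather than free text. The `ChangeLogEntry` fields and the `change_in_effect` helper are hypothetical names chosen for illustration, and the versions and dates are made-up sample data; the point is only that recording a release date, reason, re-validation status, and limitations per entry lets you look up which change was in effect on any given date.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class ChangeLogEntry:
    """One entry in a hypothetical AI system change log."""
    version: str
    released: date
    reason: str                  # e.g. "bug fix", "improved accuracy"
    revalidated: bool            # was re-validation performed for this change?
    limitations_added: List[str] = field(default_factory=list)
    limitations_resolved: List[str] = field(default_factory=list)


def change_in_effect(log: List[ChangeLogEntry], on: date) -> Optional[ChangeLogEntry]:
    """Return the most recent change released on or before `on`, or None.

    Correlates a date (e.g. when performance dropped) with the change history.
    """
    candidates = [e for e in log if e.released <= on]
    return max(candidates, key=lambda e: e.released, default=None)


# Illustrative sample data only.
change_log = [
    ChangeLogEntry("1.0", date(2025, 1, 15), "initial release", revalidated=True),
    ChangeLogEntry("1.1", date(2025, 3, 1), "retrained on new data", revalidated=True,
                   limitations_added=["lower accuracy on pre-2020 records"]),
]

entry = change_in_effect(change_log, date(2025, 3, 20))
print(entry.version, entry.reason)  # 1.1 retrained on new data
```

Keeping the log as data (in a repository or database) rather than prose also makes it easy to check that every entry records a re-validation decision.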

Format and Accessibility

Provide the documentation in an appropriate form for the audience:

- Internally, this might be a wiki or shared repository where technical staff can access up-to-date docs.

- For end users, a PDF manual, online help pages, or in-app guidance tooltips: whatever format they normally receive documentation in.

- For regulators, likely a formal document (PDF/Word) structured to address compliance points.

- Make sure documents are version-controlled and labeled with dates or version numbers, so everyone knows if they have the latest info. Also, consider if translations are needed (e.g., user docs in local languages if deploying globally).

- Ensure sensitive details are appropriately handled: e.g., you might not share full source code or detailed model weights with clients unless necessary, but you document everything internally. For regulators, you might need to reveal more under confidentiality. Determine what level of detail is appropriate and do not expose proprietary info in general user docs that could aid malicious actors (balance transparency with security).

Maintain and Update Documentation

Treat documentation as a living part of the system. Whenever the AI system changes (new version, new feature, change in usage), update the relevant docs. Also, periodically review documentation for accuracy – sometimes the system evolves and documentation lags, which can be risky if users rely on outdated instructions or if auditors find mismatches. Integrate doc updates into your change management process: no change is complete until documentation is updated. Have management approve documentation as needed (ensuring it meets quality standards and doesn’t leak anything sensitive inappropriately).
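One way to enforce “no change is complete until documentation is updated” is a small release gate in your change-management tooling. The sketch below is hypothetical: it assumes documentation is labeled with the same version string as the system it describes (as suggested under Format and Accessibility) and that management approval is recorded as a simple flag. A change can only be closed when the returned list of blocking issues is empty.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ChangeRequest:
    """Hypothetical record of a change to an AI system."""
    change_id: str
    system_version: str   # version the change produces
    docs_version: str     # version label on the updated documentation
    docs_approved: bool   # management sign-off on the documentation update


def blocking_issues(change: ChangeRequest) -> List[str]:
    """Return reasons the change cannot be closed yet; an empty list means complete."""
    issues = []
    if change.docs_version != change.system_version:
        issues.append(
            f"documentation is at {change.docs_version}, system is at {change.system_version}"
        )
    if not change.docs_approved:
        issues.append("documentation update not yet approved")
    return issues


# Two blocking issues: stale docs and no approval.
print(blocking_issues(ChangeRequest("CR-17", "2.3", "2.2", docs_approved=False)))
```

In practice this check could run in a CI pipeline or ticketing workflow; the design choice is simply to make documentation a hard precondition rather than a reminder.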

Good technical documentation builds trust: users feel informed about how the AI works and how to use it, partners can integrate smoothly, and regulators see that you are not operating a black box but have robust understanding and control of your AI.

Thorough documentation is like an insurance policy for the long term: if key personnel leave, the knowledge is not lost; if an incident happens, you have references to diagnose issues. It’s an often underestimated part of AI governance, but it’s critical for scaling AI usage responsibly.

Control 6.2.8 – A.6.2.8 / B.6.2.8

AI System Recording of Event Logs

Control 6.2.8 requires you to implement event logging for your AI system, especially during operation, so that you have a detailed record of what the system has been doing.

Determine at which phases of the AI system life cycle record-keeping of event logs should be enabled, but at a minimum when the AI system is in use.

Explanation & Implementation Guidance

Logging is the backbone of accountability and troubleshooting: it lets you trace decisions, debug issues, and demonstrate compliance. Here’s how to implement effective logging for AI systems:

Decide What to Log and When

First, identify the key events and data points that should be logged. At a minimum, when the AI system is live and making decisions or predictions, logging must be on. Consider logging events in other phases too, such as:

- Training phase: If training is done regularly (online or periodic), log training events (when training started/ended, data used, version of model produced, performance metrics achieved). This helps track model evolution and reproducibility.

- Validation/Testing phase: If you run extensive tests, logging outcomes might be useful for later review (though these might be captured in reports anyway).

- Deployment events: Log when a new model or version is deployed, who deployed it, and any immediate results (e.g., initial accuracy on a baseline test in prod).

- Operational phase (mandatory): For each instance of the AI being used (e.g., each time an AI processes an input or makes a decision), log relevant details.
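Training, validation, and deployment events are easiest to analyze later if every phase writes the same structured format. The following is a minimal sketch using Python’s standard `logging` and `json` modules; the `log_lifecycle_event` helper, the logger name, and the field values (model version, dataset name, metric) are illustrative assumptions, not something the standard prescribes.

```python
import json
import logging
from datetime import datetime, timezone

logger = logging.getLogger("ai_system.lifecycle")  # hypothetical logger name


def log_lifecycle_event(phase: str, event: str, **details) -> str:
    """Emit one lifecycle event as a single JSON line and return that line."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),  # UTC for easy correlation
        "phase": phase,   # e.g. "training", "validation", "deployment", "operation"
        "event": event,
        **details,        # free-form context such as model version or metrics
    }
    line = json.dumps(record, sort_keys=True)
    logger.info(line)
    return line


logging.basicConfig(level=logging.INFO, format="%(message)s")

# Illustrative values only.
log_lifecycle_event("training", "training_finished",
                    model_version="2.3", dataset="loans-2025-q2", auc=0.91)
log_lifecycle_event("deployment", "model_deployed",
                    model_version="2.3", deployed_by="j.doe")
```

One JSON object per line keeps the output easy to ship to a log management system and to query by phase or model version.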

Key Information to Log During Operation

Ensure the logs capture:

- Timestamp: Every log entry should have a date/time. This is basic but critical for correlating events and analyzing sequences.

- Identification of the event/use case: for example, a unique request or transaction ID if applicable, or the context of the decision. If an AI processes individual items (like loan applications) and you have an ID for each item, log that ID with the AI’s output for traceability.

- Input data references: It may not be feasible or desirable (due to privacy) to log full input data, especially if it’s large or sensitive (like images or personal text). However, log at least some reference to it – e.g., “AI received input image file X” or “Processed user ID 123’s data”. In some cases, storing a hash or an index to the data can allow later retrieval if needed, without storing the raw data in the log.

- AI output/results: Log what the AI decided or predicted. For example, “Predicted class: Fraud, Confidence: 87%”, or “Recommended action: Approve Loan”. If the output is numerical or categorical, log that. If it’s a complex structure, consider logging a summary or key result indicators.

- Out-of-bounds or exceptional conditions: specifically watch for and log any time the AI system’s output or behavior is outside the intended operating conditions. For instance:

  - If the AI flags that an input is outside its knowledge scope (some systems have novelty detection), log that occurrence.

  - If the output confidence is very low or the model couldn’t produce an output, log that.

  - If a result was overridden by a human or a rule (e.g., a human corrected the AI or a business rule took precedence), log the override.

  - Essentially, capture the moments where the AI’s operation deviates from the ideal path, as they often signify important events.

- System performance info (optional): You might also log timing (how long it took to process) or resource usage if relevant, especially for performance monitoring.

- Decisions affecting individuals: if the AI’s decision can impact a person (like denying a service), logging is doubly important. Some regulations might even require keeping logs for accountability (e.g., GDPR’s record-keeping obligations and, in some interpretations, a right to explanation). Make sure you log enough to explain the decision later if needed (e.g., the features most important to the decision or the rule that fired). But be mindful of privacy: protect sensitive personal data in logs (e.g., encrypted logs, access control) and retain it only as long as your data retention policy allows.
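The fields listed above can be captured in one record per AI decision. The sketch below is an illustration under stated assumptions: `PredictionLogEntry`, `make_entry`, and the `LOW_CONFIDENCE` threshold of 0.6 are hypothetical, and a SHA-256 digest stands in for the raw input so the entry can be matched to a separately stored copy of the input without putting the data itself in the log. Note that a hash of low-entropy personal data (such as a short ID) can be reversed by brute force, so it should not be treated as anonymization.

```python
import hashlib
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone
from typing import List, Optional

LOW_CONFIDENCE = 0.6  # hypothetical threshold; set per system


@dataclass
class PredictionLogEntry:
    timestamp: str
    request_id: str                # unique ID for traceability
    model_version: str
    input_sha256: str              # reference to the input, not the raw data
    output: Optional[str]          # None if the model produced no output
    confidence: Optional[float]
    latency_ms: float              # optional performance info
    human_override: Optional[str] = None
    flags: List[str] = field(default_factory=list)  # out-of-bounds conditions


def make_entry(request_id: str, model_version: str, raw_input: bytes,
               output: Optional[str], confidence: Optional[float],
               latency_ms: float, human_override: Optional[str] = None) -> PredictionLogEntry:
    """Build one log record, flagging exceptional conditions explicitly."""
    flags = []
    if output is None:
        flags.append("no_output")
    elif confidence is not None and confidence < LOW_CONFIDENCE:
        flags.append("low_confidence")
    if human_override is not None:
        flags.append("overridden")
    return PredictionLogEntry(
        timestamp=datetime.now(timezone.utc).isoformat(),
        request_id=request_id,
        model_version=model_version,
        input_sha256=hashlib.sha256(raw_input).hexdigest(),
        output=output,
        confidence=confidence,
        latency_ms=latency_ms,
        human_override=human_override,
        flags=flags,
    )


# Illustrative call: a fraud prediction for one loan application.
entry = make_entry("loan-10442", "2.3", b'{"applicant_id": 123}', "Fraud", 0.87, 42.0)
print(json.dumps(asdict(entry)))
```

Recording explicit `flags` means the exceptional cases can be counted and alerted on without re-deriving them from raw fields.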

Retention and Security of Logs

Determine how long to keep the logs. The standard says logs should be retained for as long as required for their intended use and in line with organizational policy, noting that legal requirements may also apply.

- For example, if the AI is involved in financial transactions, you might need to keep logs for X years for audit/regulatory purposes. If it’s a low-risk internal tool, you might not need logs beyond a few weeks or months.

- Align this with your overall data retention policy: avoid keeping logs longer than allowed if they contain personal data (to comply with privacy laws). At the same time, don’t delete them too soon if they might be needed for investigating issues or fulfilling oversight duties.

- Implement access controls on logs because they might contain sensitive information or give insight into your AI logic. Only authorized personnel should retrieve and analyze logs.

- If logs are extensive, set up log management systems (like SIEM or log analytics tools) to store and query them efficiently. Ensure backups or redundancy as needed because losing logs might mean losing traceability for a period.
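Retention rules like these can be applied mechanically once each log entry carries a category. This is a minimal sketch: the category names and periods in `RETENTION` are hypothetical placeholders (roughly seven years for financial decisions, 90 days for an internal tool), and the real values must come from your retention policy and any applicable legal requirements. An unknown category raises a `KeyError` on purpose, so nothing is silently kept or purged.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Tuple

# Hypothetical retention periods per log category.
RETENTION = {
    "financial_decision": timedelta(days=7 * 365),  # approx. seven years
    "internal_tool": timedelta(days=90),
}


def split_by_retention(entries: List[Dict], now: datetime) -> Tuple[List[Dict], List[Dict]]:
    """Partition entries into (keep, expired) by their category's retention period.

    Expects ISO 8601 timestamps with a UTC offset and a timezone-aware `now`.
    """
    keep, expired = [], []
    for e in entries:
        age = now - datetime.fromisoformat(e["timestamp"])
        period = RETENTION[e["category"]]  # KeyError for unknown categories
        (expired if age > period else keep).append(e)
    return keep, expired


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
entries = [
    {"timestamp": "2025-01-01T00:00:00+00:00", "category": "internal_tool"},
    {"timestamp": "2025-01-01T00:00:00+00:00", "category": "financial_decision"},
]
keep, expired = split_by_retention(entries, now)
print(len(keep), len(expired))  # 1 1
```

Returning the expired entries instead of deleting them directly leaves room for a review step (for example, a legal hold) before anything is purged.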

Utilize Logs for Traceability and Monitoring

The purpose of logging is not just to store data, but to use it for:

- Traceability: if a question arises, say, “Why did the AI make decision X on date Y for case Z?”, your logs should allow you to reconstruct the event. Ideally, you can find the log entry for case Z on date Y and see the AI’s output and any related information (including the model version, if you log it on each call). This traceability is crucial for debugging, for explaining decisions to affected users or auditors, and for learning how to improve the system.

- Detection of anomalies: Set up automated monitoring on the logs to detect unusual patterns. For example, if normally only 1% of outputs are low confidence but suddenly 10% are, that might indicate a problem (maybe a change in input data distribution). Or if the AI starts outputting the same result repeatedly (maybe stuck in a certain state), logs will show that. Monitoring tools can trigger alerts on such patterns so you can address issues proactively.

- Accountability and incident analysis: If there’s an incident (say, the AI made a serious mistake or went down), logs are your forensic evidence. They’ll help you pinpoint what happened leading up to the incident. Without logs, you’re guessing.

- Compliance evidence: In regulated contexts, you might need to demonstrate that you are controlling the AI. Logs can show that you have been reviewing performance, that you can trace decisions, and that you kept records for the required time. For instance, some jurisdictions might require logging for certain AI (like biometric ID systems) – make sure to meet those specific requirements if they apply (e.g., log who accessed the system, search queries, results, etc., and keep for mandated period).
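Two of the uses above, traceability lookups and anomaly detection, can be sketched in a few lines. The code below is hypothetical: `find_decisions` assumes log entries are dictionaries with a `request_id` and an ISO 8601 UTC `timestamp`, and `LowConfidenceRateMonitor` alerts when the share of low-confidence outputs in a sliding window exceeds a threshold, echoing the “normally 1%, suddenly 10%” example. The window size, the 5% alert rate, and the 0.6 confidence cut-off are illustrative defaults, not recommended values.

```python
from collections import deque
from datetime import date
from typing import Dict, List


def find_decisions(entries: List[Dict], case_id: str, day: date) -> List[Dict]:
    """Traceability: return the log entries for one case on one UTC calendar day."""
    prefix = day.isoformat()  # e.g. "2025-03-02"
    return [e for e in entries
            if e["request_id"] == case_id and e["timestamp"].startswith(prefix)]


class LowConfidenceRateMonitor:
    """Anomaly detection: alert when too many recent outputs are low confidence."""

    def __init__(self, window: int = 1000, alert_rate: float = 0.05,
                 low_confidence: float = 0.6):
        self.recent = deque(maxlen=window)  # True means a low-confidence output
        self.alert_rate = alert_rate
        self.low_confidence = low_confidence

    def observe(self, confidence: float) -> bool:
        """Record one output's confidence; return True if an alert should fire."""
        self.recent.append(confidence < self.low_confidence)
        if len(self.recent) < self.recent.maxlen:
            return False  # wait for a full window to avoid noisy early alerts
        rate = sum(self.recent) / len(self.recent)
        return rate > self.alert_rate


# Synthetic stream: 1% low confidence at first, then a burst of low-confidence outputs.
monitor = LowConfidenceRateMonitor(window=100, alert_rate=0.05)
stream = [0.9] * 99 + [0.3] + [0.2] * 10
first_alert = next(i for i, c in enumerate(stream) if monitor.observe(c))
print("first alert at output", first_alert)  # first alert at output 104
```

In production the same logic would typically live in your log analytics or monitoring tool as an alert rule; the sketch only shows the shape of the check.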

Phase-specific Logging

The control mentions determining at which phases logging should be enabled. While operation is the minimum, consider if logging during other phases is needed for traceability:

- During development: It could be useful to log decisions made in training (like which features were selected, etc.), though this is often captured in documentation rather than continuous logs.

- During testing/validation: If you run automated test suites, logs of test results can be kept, but usually a summarized report suffices.

- During maintenance updates: log when models are updated as part of maintenance. This is also covered under documentation, but an operational audit-log entry such as “Model v2.3 deployed on 2026-09-01 by J. Doe” is valuable as well.

- Essentially, logging is most critical when the AI is actively making decisions in the wild. Use common sense for other phases – if it adds value to record an event, do so.

- Special Cases and Jurisdictional Requirements: be aware of whether the AI system is subject to laws that require certain logging. For example, biometric AI systems in some jurisdictions must log every time they’re used and who was identified. If you operate such systems, research those laws and ensure your logging meets them. Similarly, if dealing with personal data, ensure log retention is consistent with data protection laws (don’t keep personal information longer than allowed unless an exception applies).

Implementing Control 6.2.8 effectively means that nothing the AI system does goes unaccounted for. You have a record – which is the foundation of accountability. It provides confidence to management, regulators, and customers that if something goes wrong, you’ll know about it and be able to analyze it. Moreover, it helps in continual improvement: the data in logs can feed into analytics to improve future versions of the AI or adjust processes. Logging might seem technical, but it’s fundamentally about trust: it’s hard to trust a system that leaves no trace. With robust logging, you shine light on the AI’s operations and make it auditable and transparent (at least internally), which is exactly what responsible AI management demands.

Concluding AI System Life Cycle (A.6/B.6)

The AI system life cycle controls (A.6/B.6) in ISO 42001 provide a comprehensive roadmap to govern AI projects from cradle to grave.