AI Policy Template: ISO/IEC 42001 Clause 5.2 & A.2.2/B.2.2

Build a strong foundation for your AI management system with an editable AI Policy Template designed to support ISO/IEC 42001 implementation.

This template helps you move beyond a basic AI acceptable-use policy by providing a structured, top-level governance document for organizations that develop, deploy, procure, or use AI. It covers the policy areas most teams need to formalize responsible AI governance, including leadership commitments, lifecycle governance, risk and impact assessment, data governance, human oversight, transparency, third-party controls, acceptable and prohibited use, and ongoing review.

Drafting notes are built in throughout, making it easier to adapt the document to your scope, roles, risk profile, legal environment, and AI operating model without starting from a blank page.

What Is an AI Policy Template?

A practical AI policy template is a draft of the organization’s governing AI charter, ready to be tailored and approved.

It gives the business a repeatable way to state what AI activity is in scope, what commitments leadership is making, who is accountable, how risk and impact are handled, and how the organization will monitor and improve its AI management system over time. Because ISO/IEC 42001 is a management-system standard, the template is less about model architecture and more about organizational intent, responsibilities, documented information, and governance discipline.

Why the ISO/IEC 42001 AI Policy Is Important

The AI Policy is a foundational governance document and a mandatory requirement under ISO/IEC 42001 Clause 5.2. It helps an organization explain how AI should be used, who is accountable, what risks must be managed, and what controls are expected.

Without a formal AI Policy, organizations may face challenges such as:

- Unapproved use of public AI tools.

- Sensitive data being entered into AI systems without authorization.

- Lack of accountability for AI-generated outputs.

- Unclear ownership of AI systems.

- Insufficient human oversight.

- Inconsistent risk assessment practices.

- Vendor AI risks going unmanaged.

- Difficulty demonstrating compliance or responsible AI practices.

- Limited visibility over AI systems used across the business.

- Increased exposure to privacy, security, legal, ethical, and reputational risks.

This template helps address these challenges by giving the organization a clear starting point for AI governance.

Who Should Use This Template?

This template is suitable for a wide range of organizations that use, develop, procure, or manage AI systems.

It is especially useful for:

- Organizations implementing ISO/IEC 42001.

- Organizations preparing for AI management system certification.

- Companies developing internal AI governance frameworks.

- Businesses using generative AI tools such as chatbots, writing assistants, coding assistants, analytics tools, or automation platforms.

- Organizations procuring third-party AI software or cloud-based AI services.

- Startups building AI-enabled products or services.

- SMEs that need a practical AI governance policy.

- Enterprises formalizing AI oversight across multiple business functions.

- Governance, risk, and compliance professionals.

- Information security and privacy teams.

- AI governance officers and responsible AI leads.

- Internal auditors reviewing AI management controls.

- Legal and compliance teams managing AI-related obligations.

What’s Included in the Template

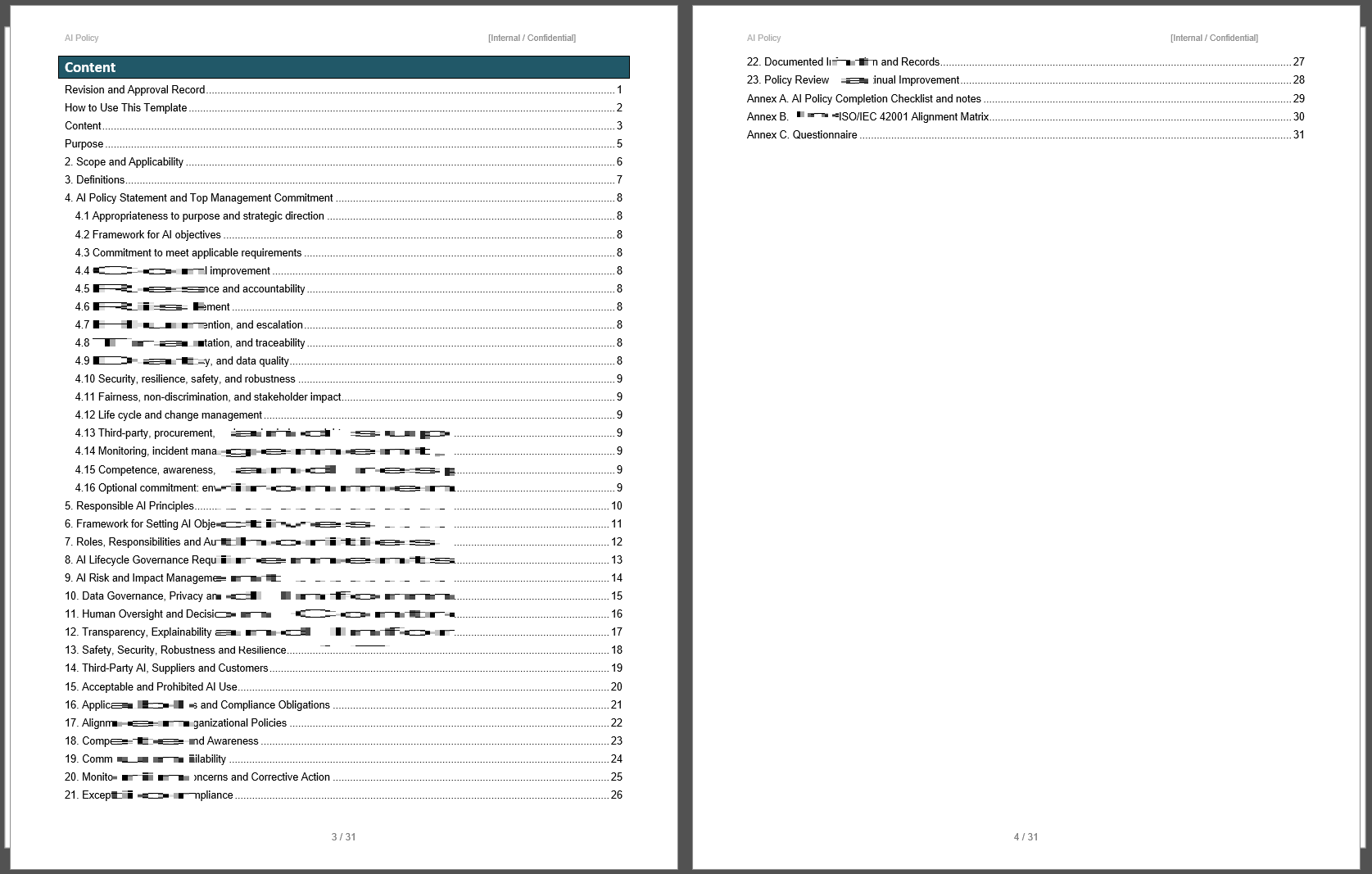

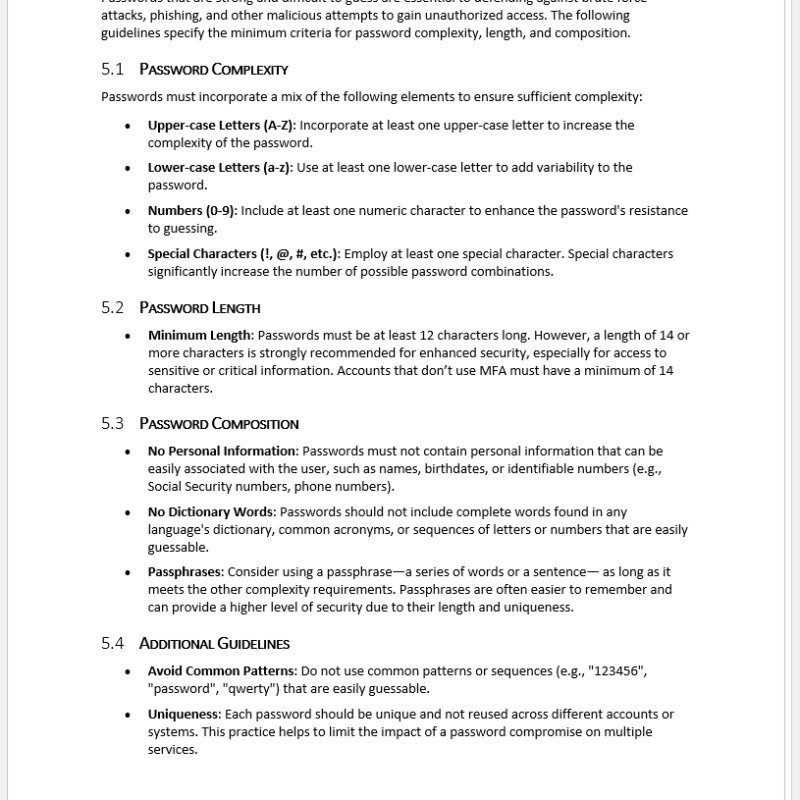

The ISO/IEC 42001 AI Policy Template includes the following major sections:

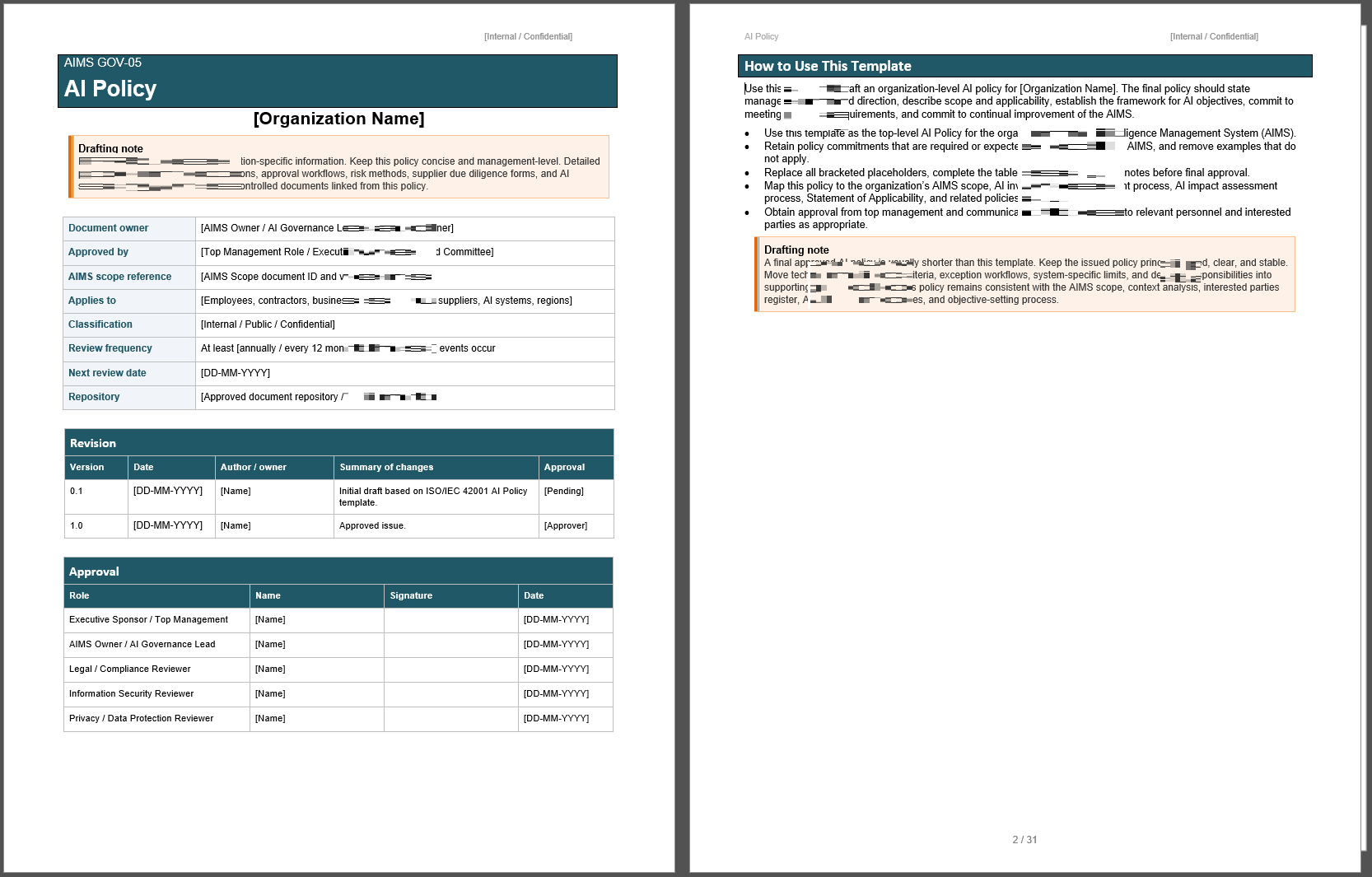

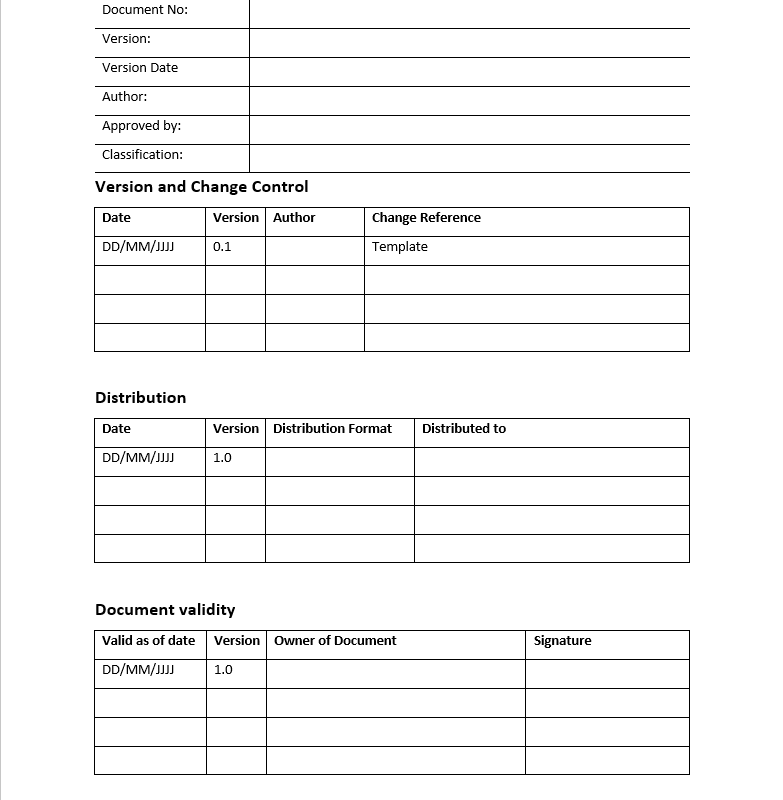

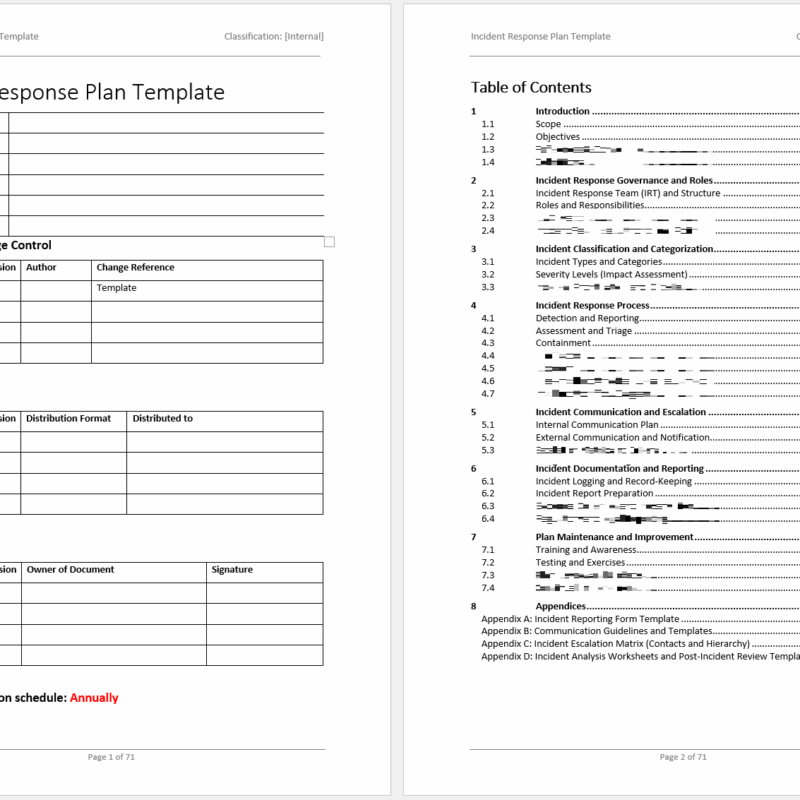

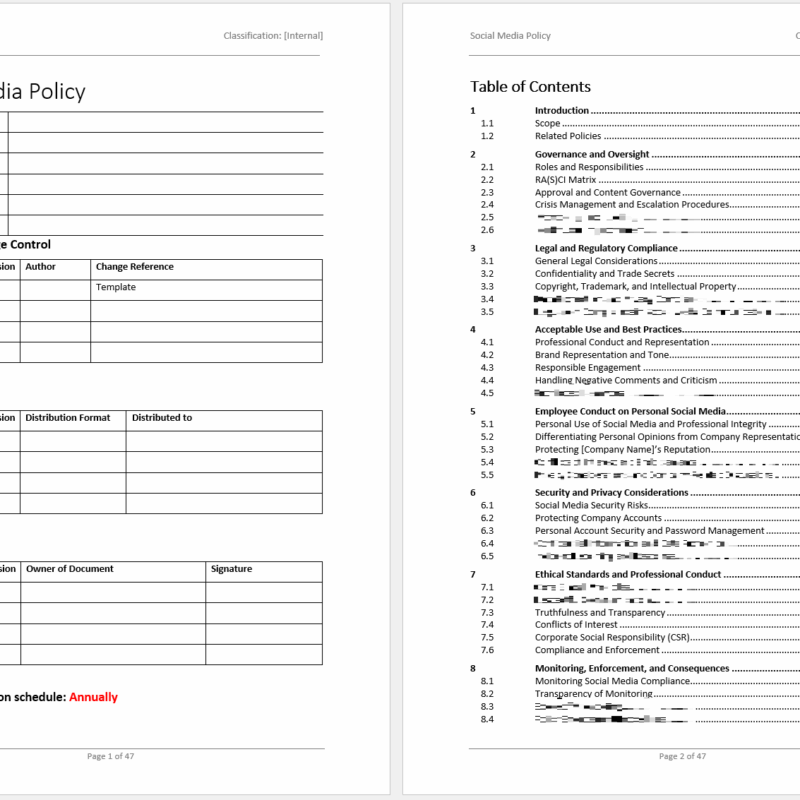

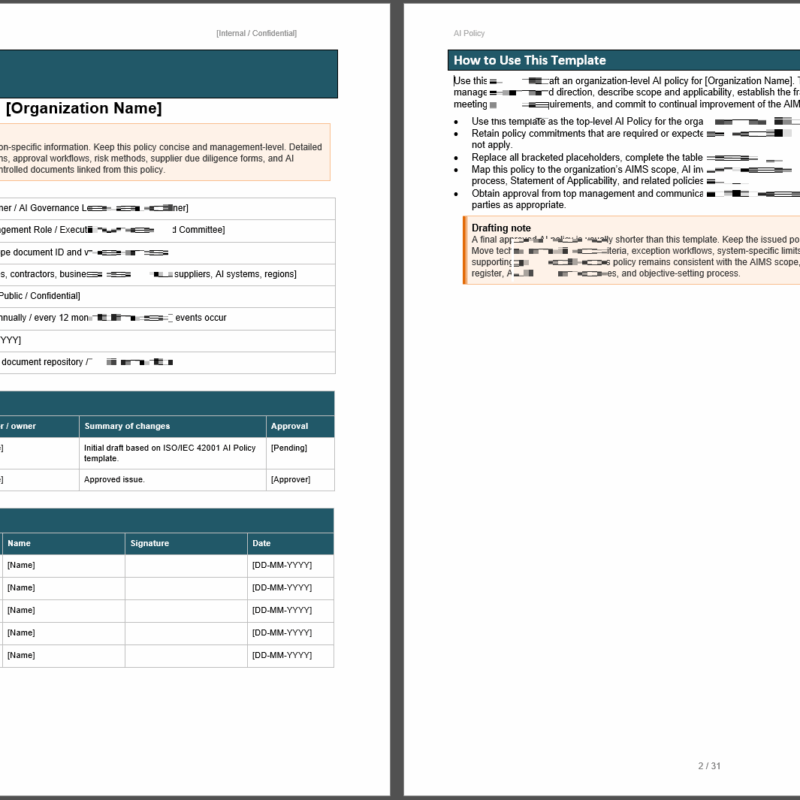

Document Control

Includes fields for policy title, document owner, approver, version number, effective date, review date, classification, approval status, and revision history. This helps organizations manage the policy as a controlled document.
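For teams that also track controlled documents in a register or script, the fields above could be modelled roughly as follows. This is a minimal sketch, not part of the template: the class names, types, sample values, and the `review_overdue` helper are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class RevisionEntry:
    """One row of the revision history (hypothetical structure)."""
    version: str
    changed_on: date
    summary: str


@dataclass
class DocumentControl:
    # Field names mirror the document control fields listed above;
    # the types and the empty default history are assumptions.
    policy_title: str
    document_owner: str
    approver: str
    version: str
    effective_date: date
    review_date: date
    classification: str
    approval_status: str
    revision_history: list[RevisionEntry] = field(default_factory=list)

    def review_overdue(self, today: date) -> bool:
        """Return True when the scheduled review date has passed."""
        return today > self.review_date


# Sample values are placeholders, not recommendations.
control = DocumentControl(
    policy_title="AI Policy",
    document_owner="Head of AI Governance",
    approver="Chief Executive Officer",
    version="1.0",
    effective_date=date(2025, 1, 1),
    review_date=date(2026, 1, 1),
    classification="Internal",
    approval_status="Approved",
)
```

Calling `control.review_overdue(date.today())` would then flag a policy whose review date has lapsed, which is one simple way to keep the policy under document control.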

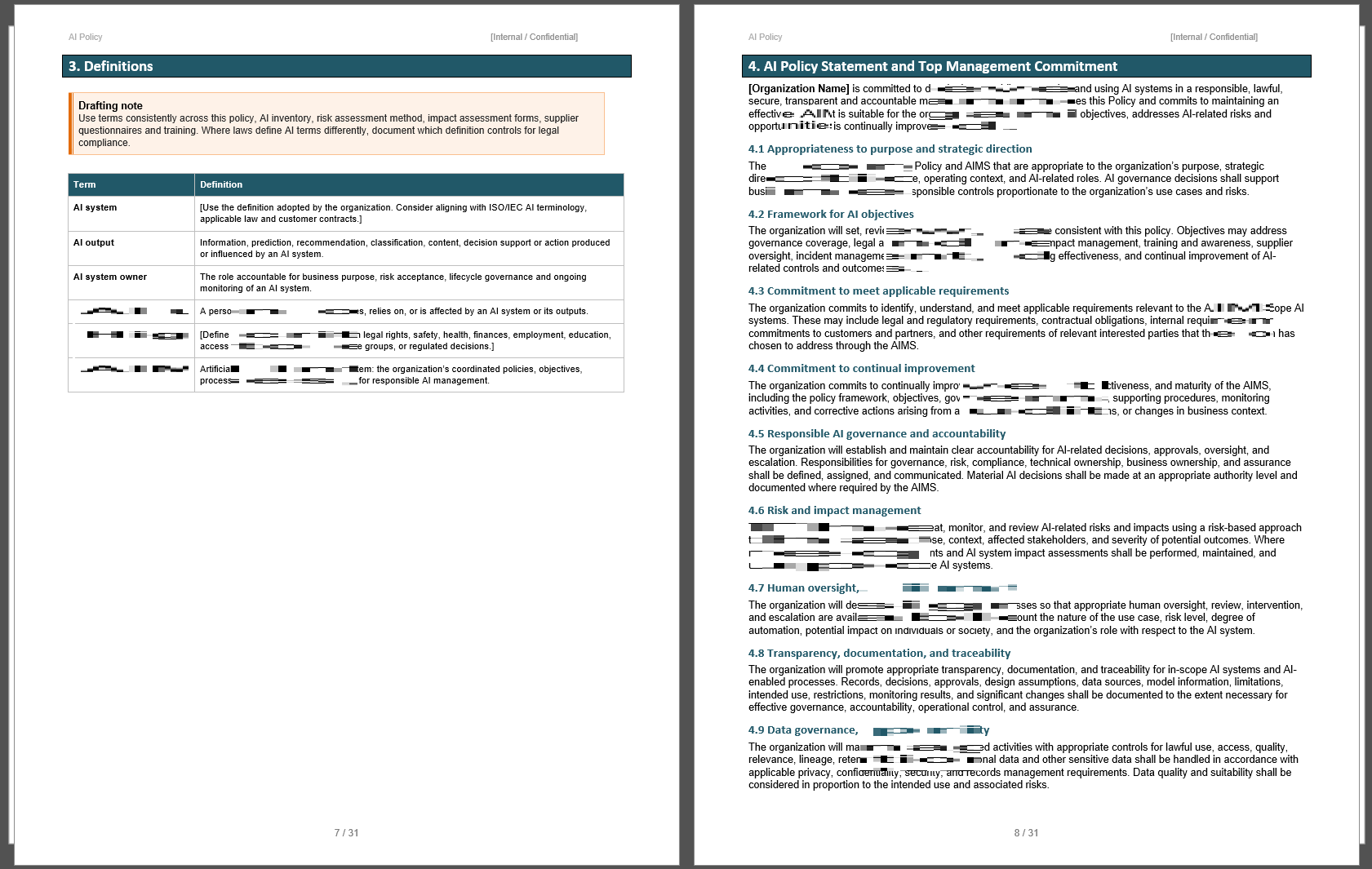

Policy Statement

Provides a formal statement of the organization’s commitment to responsible AI governance, risk management, compliance, transparency, and continual improvement.

Purpose

Explains why the AI Policy exists and what it is intended to achieve.

Scope

Defines the systems, users, departments, activities, suppliers, and AI use cases covered by the policy.

Definitions

Includes space to define key AI governance terms such as AI system, AI lifecycle, AI risk, AI owner, user, supplier, training data, output, human oversight, and impact assessment.

AI Governance Principles

Provides a set of principles for responsible AI, including accountability, transparency, fairness, privacy, security, safety, reliability, robustness, explainability, and human-centric use.

AI Objectives

Includes a framework for setting AI governance objectives, such as reducing unmanaged AI use, improving risk assessments, increasing awareness, strengthening vendor controls, and improving monitoring of AI systems.

Roles and Responsibilities

Defines responsibilities for senior leadership, AI governance teams, AI system owners, risk and compliance teams, information security, privacy teams, procurement, technical teams, business users, and third-party providers.

AI System Lifecycle Requirements

Covers policy requirements for AI system planning, design, development, testing, approval, deployment, operation, monitoring, maintenance, change management, and retirement.

AI Risk and Impact Management

Includes requirements for identifying, assessing, documenting, treating, and reviewing AI-related risks and impacts.

Data Governance

Addresses data quality, data protection, privacy, security, consent, lawful basis, data minimization, data accuracy, training data, validation data, and data retention considerations.

Human Oversight

Defines expectations for human review, intervention, escalation, approval, and accountability where AI systems support or influence decisions.

Transparency and Explainability

Provides policy requirements for communicating AI use, documenting system purpose, explaining AI outputs where appropriate, and maintaining transparency with relevant stakeholders.

Security and Resilience

Includes requirements for protecting AI systems, data, models, outputs, integrations, and related infrastructure against misuse, unauthorized access, manipulation, or failure.

Third-Party AI Systems

Covers supplier due diligence, contractual expectations, vendor risk assessment, performance monitoring, data protection, service changes, and accountability for outsourced or externally provided AI systems.

Acceptable Use of AI

Gives examples of acceptable AI use, such as productivity support, research assistance, analysis, content drafting, automation, decision support, and internal process improvement, subject to appropriate controls.

Prohibited or Restricted Use of AI

Includes examples of AI uses that may be prohibited or require additional approval, such as processing sensitive data without authorization, making high-impact decisions without human oversight, generating misleading content, bypassing security controls, or using unapproved AI tools for confidential information.
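Some organizations turn rules like these into a simple pre-use check. The sketch below shows one possible shape for such a check. The `ProposedUse` fields, the `Decision` values, and the mapping of each example to "prohibited" versus "needs approval" are assumptions for illustration; the actual thresholds belong in the tailored policy.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOWED = "allowed"
    NEEDS_APPROVAL = "needs_approval"
    PROHIBITED = "prohibited"


@dataclass(frozen=True)
class ProposedUse:
    """Hypothetical description of a proposed AI use."""
    tool_approved: bool
    handles_confidential_data: bool
    data_use_authorized: bool
    high_impact_decision: bool
    human_oversight: bool


def evaluate_use(use: ProposedUse) -> Decision:
    # Illustrative rules only; the ordering puts prohibitions first.
    if use.handles_confidential_data and not use.tool_approved:
        return Decision.PROHIBITED  # unapproved tool with confidential information
    if use.high_impact_decision and not use.human_oversight:
        return Decision.PROHIBITED  # high-impact decision without human oversight
    if use.handles_confidential_data and not use.data_use_authorized:
        return Decision.NEEDS_APPROVAL  # sensitive data lacks authorization
    if not use.tool_approved:
        return Decision.NEEDS_APPROVAL  # tool has not been through approval
    return Decision.ALLOWED
```

For example, `evaluate_use(ProposedUse(True, True, False, False, True))` returns `Decision.NEEDS_APPROVAL`, because the tool is approved but the data use has not been authorized.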

Training and Awareness

Requires employees and relevant stakeholders to receive appropriate guidance on AI responsibilities, approved tools, risks, escalation routes, and policy expectations.

Monitoring, Review, and Continual Improvement

Includes requirements for periodic review of AI systems, policy effectiveness, incidents, risk assessments, control performance, user feedback, and improvement actions.

Non-Compliance

Defines how violations of the AI Policy may be reported, investigated, escalated, and addressed.

Approval and Sign-Off

Includes an approval section for authorized management review and sign-off.

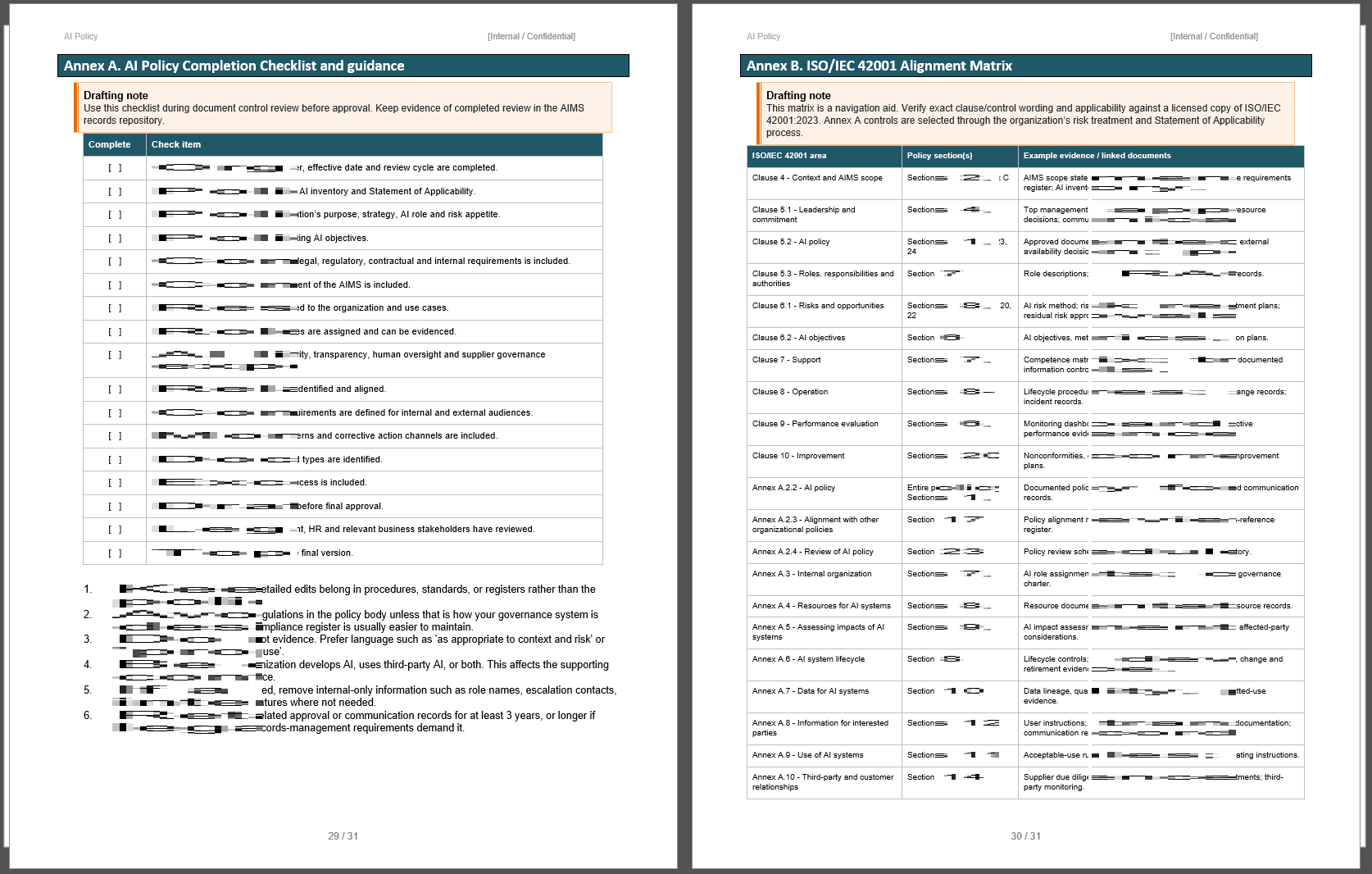

Completion Checklist

Provides a practical checklist to help users confirm that the policy has been reviewed, tailored, approved, communicated, and linked to relevant AI governance processes.

Questionnaire

Includes questions to help organizations adapt the policy to their own AI environment, such as what AI systems are used, who owns them, what data is processed, what risks exist, which laws apply, and what approvals are needed.
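The questionnaire answers can also feed an AI system inventory. As a rough sketch, assuming one record per AI system, the structure below captures the questions listed above and reports which ones are still unanswered. The `AISystemRecord` class, its field names, and the sample values are hypothetical and not part of the template.

```python
from dataclasses import dataclass, field, fields


@dataclass
class AISystemRecord:
    """One inventory entry built from questionnaire answers (hypothetical)."""
    name: str
    owner: str = ""
    data_processed: list[str] = field(default_factory=list)
    identified_risks: list[str] = field(default_factory=list)
    applicable_laws: list[str] = field(default_factory=list)
    approvals_needed: list[str] = field(default_factory=list)

    def unanswered(self) -> list[str]:
        """Return the names of questionnaire items that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Sample values are placeholders for illustration.
record = AISystemRecord(
    name="Support chatbot",
    owner="Customer Service Lead",
    data_processed=["customer messages"],
)
gaps = record.unanswered()
```

Here `gaps` lists the risk, legal, and approval questions, which gives the policy owner a short follow-up list before the policy is finalized.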